Guide 104

AI Crawler Access Guide: robots.txt for AI Search

Complete robots.txt allow list for AI crawlers — GPTBot, ClaudeBot, PerplexityBot, Google-Extended, Bingbot. Plus verification and common gotchas.

Last updated: May 2026

The single most common cause of low AI search visibility we see in brand audits has a one-line fix.

A surprising number of brands have a User-agent: Google-Extended group with Disallow: / in their robots.txt, usually inherited from a starter template that was overly conservative about AI training. The side effect is that Google's AI Overview is structurally barred from citing the site at all, regardless of content quality, structured data, or organic Google rank.

This guide is the operational reference for AI crawler access — the full crawler list, a copy-pasteable robots.txt allow list, server-side verification commands, and the gotchas that trip up most teams. If you do nothing else for AI search visibility this quarter, fix this.

Why crawler access is the prerequisite for everything

AI search visibility breaks down into three layers — indexed, cited, routable. (For the full layer model, see How to Measure AI Search Visibility.)

Crawler access is the input to layer one. If GPTBot has not crawled your site, your content cannot enter ChatGPT's training corpus. If PerplexityBot is blocked, Perplexity cannot cite you. If Google-Extended is disallowed, Google AI Overview drops you from its citation candidate set.

Crawler access caps the ceiling of every downstream optimization. You can have the best structured data in your category, the freshest content, and the strongest entity graph — and still be invisible on AI search if your robots.txt blocks the crawlers that feed those platforms.

The crawler list — what each one does

Six crawlers matter for AI search visibility today. Each serves a different platform.

Googlebot

Google's primary search crawler. Powers organic Google search rankings. Indirectly, it powers AI Overview and Gemini sourcing — both of those products read from Google's main index, which Googlebot builds.

Powers: Google organic search, Google AI Overview, Gemini

Google-Extended

Google's AI-specific crawler signal. Distinct from Googlebot: this user-agent token controls whether Google's AI products are allowed to use your content for AI training and AI surface eligibility. It is a robots.txt control token rather than a separate crawler: Google fetches pages with its existing user agents, so Google-Extended never appears in access logs. Blocking Google-Extended does NOT block Googlebot (your organic Google rankings are unaffected), but it does disqualify your site from AI Overview citation.

Powers: AI Overview eligibility, Gemini training data

Bingbot

Microsoft's search crawler. Critical for ChatGPT visibility because ChatGPT's web search grounds against Bing's index, not Google's. A brand well-indexed on Google but poorly indexed on Bing will be invisible to ChatGPT regardless of Google rank.

Powers: Bing search, ChatGPT web search, Microsoft Copilot

GPTBot

OpenAI's crawler used to gather training data for future ChatGPT model versions. Allowing GPTBot affects whether your content can become part of trained-knowledge in subsequent training cycles.

Powers: ChatGPT trained-knowledge (long-term horizon)

ClaudeBot

Anthropic's crawler. Builds training data for future Claude model versions.

Powers: Claude trained-knowledge

PerplexityBot

Perplexity's own crawler. Builds Perplexity's independent search index.

Powers: Perplexity search

For the full mechanics of each crawler — what it does, how it behaves, version differences — see GPTBot, ClaudeBot, PerplexityBot Explained.

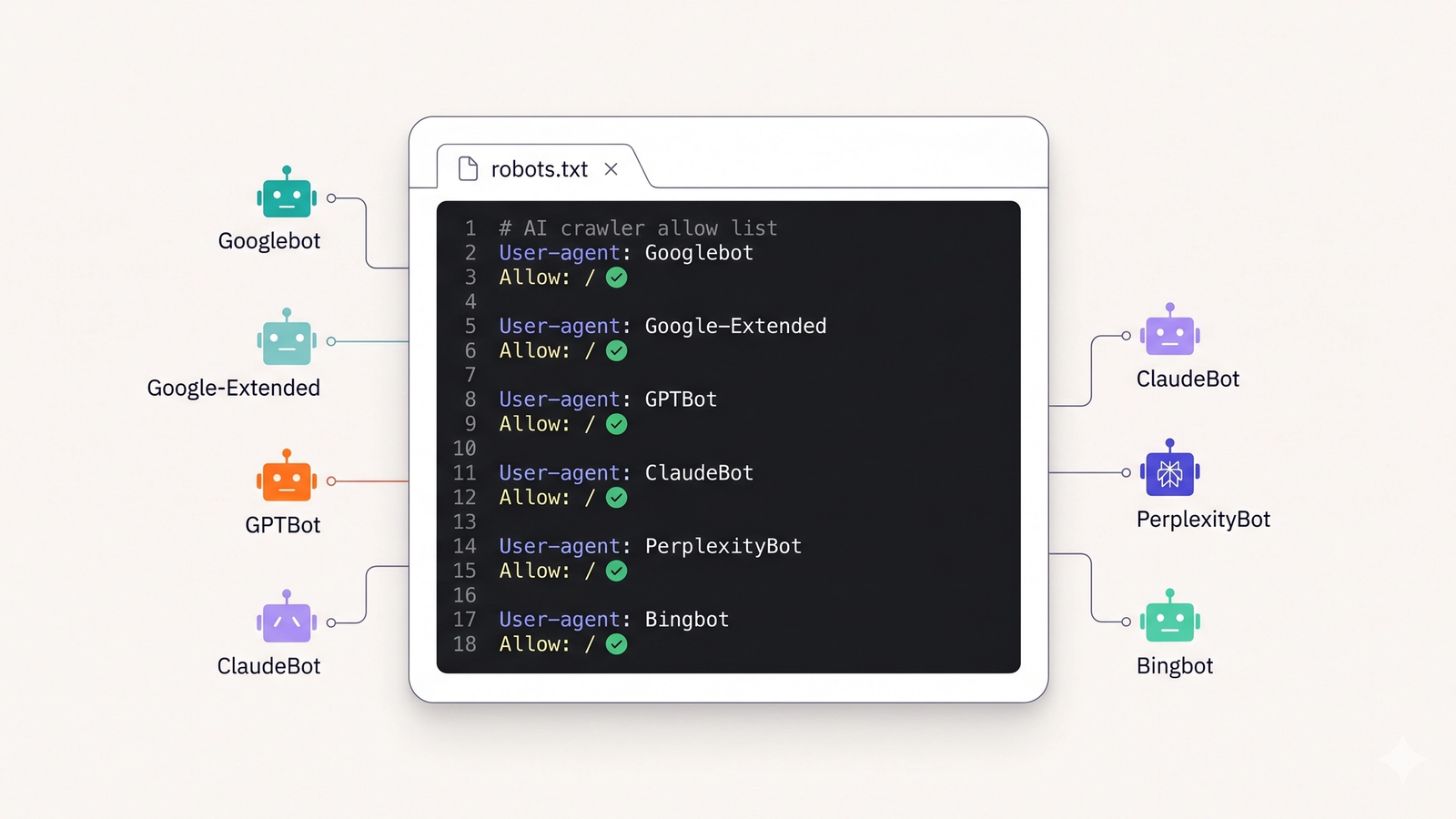

The complete copy-pasteable robots.txt allow list

Drop this into your robots.txt at site root. Replace https://yoursite.com/sitemap.xml with your actual sitemap URL.

# ── AI search crawlers ─────────────────────────────────────────────

# Google
User-agent: Googlebot
Allow: /

User-agent: Google-Extended
Allow: /

# Microsoft (powers ChatGPT web search)
User-agent: Bingbot
Allow: /

# OpenAI (ChatGPT training)
User-agent: GPTBot
Allow: /

# Anthropic (Claude training)
User-agent: ClaudeBot
Allow: /

# Perplexity (Perplexity index)
User-agent: PerplexityBot
Allow: /

# ── Default policy for everything else ─────────────────────────────
User-agent: *
Allow: /
Disallow: /admin/
Disallow: /api/
Disallow: /_next/
Disallow: /private/

# ── Sitemap ────────────────────────────────────────────────────────
Sitemap: https://yoursite.com/sitemap.xml

The named-bot blocks at the top are explicit allow rules. The User-agent: * block at the bottom is the catch-all default; adjust the Disallow: lines to match your site's private paths. A crawler that has its own named group follows only that group and ignores the catch-all, so repeat the Disallow: lines under a named bot if it should also stay out of those paths.
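One way to sanity-check an allow list before deploying it is to parse it locally. The sketch below uses Python's standard-library urllib.robotparser on a trimmed, illustrative copy of the file; the bot name SomeOtherBot and the test URLs are placeholders, not part of the original list. The catch-all here omits Allow: / because Python's parser applies the first matching line in a group rather than the longest match, so treat it as a stand-in for real crawler behavior, not a replica of it.

```python
from urllib.robotparser import RobotFileParser

# Trimmed, illustrative copy of the allow list (not the full file).
ROBOTS_TXT = """\
User-agent: GPTBot
Allow: /

User-agent: ClaudeBot
Allow: /

User-agent: *
Disallow: /admin/
Disallow: /private/
"""


def check_access(robots_txt, agents, url):
    """Return {agent: allowed?} for one URL under the given robots.txt text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


AGENTS = ["GPTBot", "ClaudeBot", "SomeOtherBot"]  # SomeOtherBot is hypothetical
home = check_access(ROBOTS_TXT, AGENTS, "https://yoursite.com/")
admin = check_access(ROBOTS_TXT, AGENTS, "https://yoursite.com/admin/users")

print(home)   # every agent may fetch the home page
print(admin)  # named bots skip the catch-all, so only SomeOtherBot is blocked
```

The second result illustrates why private paths need to be repeated under a named group if that bot should respect them.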

Optional but recommended additions

Some teams also want to allow the secondary AI crawlers:

# ── Additional AI crawlers (optional) ──────────────────────────────

User-agent: Anthropic-AI
Allow: /

User-agent: CCBot
Allow: /

User-agent: Bytespider
Allow: /

User-agent: Applebot-Extended
Allow: /

CCBot is the Common Crawl bot; its dataset is used by many AI training pipelines. Bytespider is ByteDance's crawler. Applebot-Extended is Apple's control token for whether content fetched by Applebot may be used to train Apple's AI models; it does not crawl on its own. Allowing these expands your reach into AI products downstream of those datasets.
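If the list of optional tokens changes often, it may be easier to generate these groups than to hand-edit them. The helper below is a minimal sketch; the function name render_allow_groups and its section_title parameter are hypothetical, and the output is only the optional section, not a complete robots.txt.

```python
def render_allow_groups(tokens, section_title="Additional AI crawlers (optional)"):
    """Render one 'User-agent / Allow: /' group per robots.txt token."""
    lines = [f"# {section_title}"]
    for token in tokens:
        # Blank line before each group keeps the groups visually separate.
        lines += ["", f"User-agent: {token}", "Allow: /"]
    return "\n".join(lines) + "\n"


optional_tokens = ["Anthropic-AI", "CCBot", "Bytespider", "Applebot-Extended"]
section = render_allow_groups(optional_tokens)
print(section)
```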

How to verify crawlers are actually accessing your site

Putting an allow rule in robots.txt does not guarantee that a crawler will visit. You verify by checking server logs.

nginx access logs — quick grep

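# Last 7 days of AI bot hits (adjust path and glob to your access logs)
zcat -f /var/log/nginx/access.log* | \
  grep -iE "GPTBot|ClaudeBot|PerplexityBot|Bingbot|Googlebot" | \
  awk '{print $1, $7, $9}' | \
  sort | uniq -c | sort -rn | head -50

This produces a list of (count, IP, URL, status) rows, useful for spotting which pages crawler IPs are hitting and how often. The timestamp field is left out so that repeated requests collapse into one counted row. The match is case-insensitive because Bing's crawler identifies itself as lowercase bingbot. Google-Extended is omitted because it is a robots.txt token rather than a user-agent string, so it never appears in access logs.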

Apache access logs — same pattern

zcat -f /var/log/apache2/access.log* | \
  grep -iE "GPTBot|ClaudeBot|PerplexityBot|Bingbot|Googlebot" | \
  awk '{print $9, $7}' | sort | uniq -c | sort -rn | head -50

Per-bot summary count

# How many requests from each bot in the last 7 days?
for bot in GPTBot ClaudeBot PerplexityBot Bingbot Googlebot; do
  count=$(zcat -f /var/log/nginx/access.log* | grep -ci "$bot")
  echo "$bot: $count"
done

If you see zero hits for a bot in the last 7 days even though it's allowed in robots.txt, something is blocking it upstream of your origin server (see CDN/firewall section below).
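The same per-bot count can be sketched in Python, which is convenient when logs are already being processed by a script. The sample log lines below are made up for illustration, with simplified user-agent strings and documentation-range IPs; count_bot_hits is a hypothetical helper name.

```python
import re
from collections import Counter

# User-agent substrings to look for. Matching is case-insensitive because
# some crawlers (Bing's, for example) send a lowercase token.
BOT_TOKENS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Bingbot", "Googlebot"]
BOT_PATTERN = re.compile("|".join(map(re.escape, BOT_TOKENS)), re.IGNORECASE)
CANONICAL = {token.lower(): token for token in BOT_TOKENS}


def count_bot_hits(log_lines):
    """Count access-log lines per bot token (first token found on each line)."""
    counts = Counter({token: 0 for token in BOT_TOKENS})
    for line in log_lines:
        match = BOT_PATTERN.search(line)
        if match:
            counts[CANONICAL[match.group(0).lower()]] += 1
    return counts


# Fabricated sample lines in combined log format, for illustration only.
SAMPLE_LINES = [
    '203.0.113.5 - - [01/May/2026:10:00:00 +0000] "GET / HTTP/1.1" 200 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.0)"',
    '203.0.113.9 - - [01/May/2026:10:00:05 +0000] "GET /pricing HTTP/1.1" 200 900 "-" "Mozilla/5.0 (compatible; bingbot/2.0)"',
    '198.51.100.7 - - [01/May/2026:10:00:09 +0000] "GET / HTTP/1.1" 200 512 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

hits = count_bot_hits(SAMPLE_LINES)
for token in BOT_TOKENS:
    print(f"{token}: {hits[token]}")
```

In practice the input would be an open log file (or gzip.open for rotated files) rather than a hard-coded list.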

Vercel-hosted sites

Vercel's runtime logs include the user-agent. Filter them with the Vercel CLI or in the dashboard. The pattern is the same: search for the bot user-agent strings.

Cloudflare-fronted sites

Cloudflare Analytics shows bot traffic by user-agent under the "Security" → "Bots" section. If you see traffic at the edge but not at your origin, something between the edge and your origin is dropping the requests.

Common gotchas that produce zero crawler traffic despite a clean allow list

1. Inherited Disallow: / from a starter template

Some Next.js, Astro, and WordPress templates ship with overly restrictive default robots.txt — typically User-agent: * Disallow: / for staging environments that nobody removed at production launch.

Check: view https://yoursite.com/robots.txt directly in a browser and look for a blanket Disallow: / under User-agent: *.

2. Conflicting rules

A User-agent: * block with Disallow: / can conflict with named-bot allows for some crawlers. Under the standard (RFC 9309), a crawler obeys the group that names it and falls back to User-agent: * only when no named group matches, but implementations vary across crawlers.

Fix: put named-bot allow rules at the top, catch-all User-agent: * block at the bottom, and never Disallow: / in the catch-all unless you mean it.
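The group-selection behavior behind this gotcha can be sketched as follows. This is a simplified model of what a standards-following crawler does (exact, case-insensitive token match, then fall back to the catch-all), not any particular crawler's implementation; select_rules, the GROUPS dictionary, and SomeOtherBot are illustrative names.

```python
def select_rules(groups, crawler_token):
    """Pick the rules a crawler should obey: its own group if present, else '*'."""
    wanted = crawler_token.lower()
    for token, rules in groups.items():
        if token != "*" and token.lower() == wanted:
            return rules
    return groups.get("*", [])


# A restrictive catch-all combined with one named allow group.
GROUPS = {
    "*": [("Disallow", "/")],
    "GPTBot": [("Allow", "/")],
}

print(select_rules(GROUPS, "gptbot"))        # the named group applies
print(select_rules(GROUPS, "SomeOtherBot"))  # falls back to the catch-all
```

A crawler that gets this selection wrong and reads the catch-all first would treat the whole site as disallowed, which is why a blanket Disallow: / in the catch-all is risky.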

3. Wildcard quirks

Disallow: /*? looks like it blocks query strings but actually behaves differently across crawlers. Disallow: /*.pdf$ works in some, not others. Avoid wildcards unless necessary; prefer explicit prefix paths.
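To see what these patterns mean for a crawler that does implement wildcards, the sketch below translates a robots.txt path pattern into a regular expression, treating * as "any characters" and a trailing $ as "end of URL" in the way RFC 9309 describes. A crawler without wildcard support would treat those characters literally and get different results. The helper names and test paths are illustrative.

```python
import re


def pattern_to_regex(pattern):
    """Translate a robots.txt path pattern ('*' wildcard, trailing '$' anchor)."""
    anchored = pattern.endswith("$")
    body = pattern[:-1] if anchored else pattern
    # Escape literal pieces and join them with '.*' where a '*' appeared.
    regex = ".*".join(re.escape(part) for part in body.split("*"))
    return re.compile(regex + (r"\Z" if anchored else ""))


def matches(pattern, path):
    """True if the pattern matches from the start of the path."""
    return pattern_to_regex(pattern).match(path) is not None


print(matches("/*?", "/page?x=1"))                    # query string present
print(matches("/*?", "/page"))                        # no query string
print(matches("/*.pdf$", "/docs/a.pdf"))              # ends in .pdf
print(matches("/*.pdf$", "/docs/a.pdf?download=1"))   # anchor rejects the suffix
```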

4. Trailing slash inconsistencies

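Disallow: /admin and Disallow: /admin/ mean different things. Rules are prefix matches, so Disallow: /admin also blocks /admin.html and /administrator, while Disallow: /admin/ blocks only paths under that directory. If you intend to block a directory, use the trailing slash explicitly.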
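The difference is easy to see with a prefix-matching parser. The sketch below uses Python's standard-library urllib.robotparser as a stand-in for a crawler; blocked_paths, SomeBot, and the path list are illustrative.

```python
from urllib.robotparser import RobotFileParser


def blocked_paths(disallow_value, paths, agent="SomeBot"):
    """Return the subset of paths blocked by a single Disallow rule."""
    parser = RobotFileParser()
    parser.parse(["User-agent: *", f"Disallow: {disallow_value}"])
    return [p for p in paths if not parser.can_fetch(agent, p)]


PATHS = ["/admin", "/admin/", "/admin/users", "/administrator", "/admin.html"]

without_slash = blocked_paths("/admin", PATHS)
with_slash = blocked_paths("/admin/", PATHS)

print(without_slash)  # every path starting with "/admin" is blocked
print(with_slash)     # only paths under the /admin/ directory are blocked
```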

5. Cached robots.txt

Google caches robots.txt for up to 24 hours. Other crawlers cache for varying durations. After updating robots.txt, expect a 24-72 hour delay before crawlers respect the new rules. Patience required.

6. Silent overwrites from automated robots.txt generators

Some CMS plugins regenerate robots.txt on save and silently revert manual changes. Audit your robots.txt monthly to catch drift.
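A drift audit can be as simple as diffing the live file against the version you last reviewed. The sketch below shows only the comparison step using the standard-library difflib; fetching the live file is left out, and robots_drift plus the two sample strings are illustrative.

```python
import difflib


def robots_drift(expected_text, live_text):
    """Return unified-diff lines between the reviewed file and the live file."""
    expected = [line.rstrip() for line in expected_text.strip().splitlines()]
    live = [line.rstrip() for line in live_text.strip().splitlines()]
    return list(
        difflib.unified_diff(expected, live, "expected", "live", lineterm="")
    )


EXPECTED = "User-agent: GPTBot\nAllow: /\n"
LIVE = "User-agent: GPTBot\nDisallow: /\n"  # e.g. after a plugin regenerated it

drift = robots_drift(EXPECTED, LIVE)
for line in drift:
    print(line)
```

An empty result means the live file still matches the reviewed copy; any output is worth a look before the next crawl cycle.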

Beyond robots.txt — CDN and firewall layers

robots.txt is a polite request. CDN-level rules are enforcement.

Cloudflare

Cloudflare's "Bot Fight Mode" blocks all bots aggressively, including legitimate AI crawlers. If you have Bot Fight Mode on, AI bots will be challenged with CAPTCHAs they cannot solve and will appear as zero traffic in your origin logs even though robots.txt allows them.

Fix: in Cloudflare dashboard → Security → Bots, switch off "Bot Fight Mode" or add explicit allow rules for AI crawler user agents and IP ranges.

Vercel

Vercel's default firewall does not block AI crawlers. If you've added custom WAF rules with broad bot-blocking, audit those for AI crawler exclusions.

AWS WAF / CloudFront

If your origin sits behind AWS WAF, check WAF rules for blanket bot rules. AWS managed rule groups (e.g., AWSManagedRulesBotControlRuleSet) can block AI crawlers by default depending on configuration.

Imperva / Akamai / others

Enterprise WAF/CDN products often have aggressive bot defaults. Audit each one for explicit AI crawler whitelisting.

Rate limiting — the right way

You may want to throttle aggressive crawlers without blocking them. The clean way is Crawl-delay:

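User-agent: GPTBot
Allow: /
Crawl-delay: 5

This requests a 5-second delay between requests. Most AI crawlers respect it, but Crawl-delay is a non-standard directive and support varies by crawler. Note that Googlebot does NOT respect Crawl-delay, and Google has retired the Search Console crawl rate limiter; for Google, the supported way to slow crawling is to temporarily return 500, 503, or 429 responses.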
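To confirm how a parser reads the directive, Python's standard-library urllib.robotparser exposes the declared value through crawl_delay(). This only shows what the file says, not whether a given crawler honors it; SomeOtherBot is a placeholder name.

```python
from urllib.robotparser import RobotFileParser

parser = RobotFileParser()
parser.parse([
    "User-agent: GPTBot",
    "Allow: /",
    "Crawl-delay: 5",
])

gptbot_delay = parser.crawl_delay("GPTBot")       # delay declared in GPTBot's group
other_delay = parser.crawl_delay("SomeOtherBot")  # no matching group, no catch-all

print(gptbot_delay)
print(other_delay)
```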

For server-side rate limiting, return HTTP 429 (Too Many Requests) with a Retry-After header instead of blocking with a 403. AI crawlers respect 429+Retry-After and back off; they treat a persistent 403 as a permanent block.
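The 429 approach can be sketched as a small decision function that a request handler would call before serving a crawler. This is a minimal in-memory model under stated assumptions: the CrawlerThrottle class is hypothetical, it keys on a crawler token you have already extracted from the user-agent, and timestamps are passed in explicitly so the logic is easy to test.

```python
import math


class CrawlerThrottle:
    """Allow at most one request per `min_interval` seconds per crawler token."""

    def __init__(self, min_interval):
        self.min_interval = min_interval
        self.last_allowed = {}  # crawler token -> time of last allowed request

    def check(self, token, now):
        """Return (status, headers) for a request from `token` at time `now`."""
        last = self.last_allowed.get(token)
        if last is not None and now - last < self.min_interval:
            # Too soon: tell the crawler how many whole seconds to wait.
            wait = math.ceil(self.min_interval - (now - last))
            return 429, {"Retry-After": str(wait)}
        self.last_allowed[token] = now
        return 200, {}


throttle = CrawlerThrottle(min_interval=5)
first = throttle.check("GPTBot", now=100.0)
second = throttle.check("GPTBot", now=102.0)
third = throttle.check("GPTBot", now=105.0)

print(first, second, third)
```

A production setup would more likely enforce this at the reverse proxy or CDN, but the response shape (429 plus Retry-After in seconds) is the same.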

Frequently Asked Questions

Will allowing GPTBot let OpenAI use my content for training?

Yes. GPTBot is OpenAI's crawler explicitly used to gather training data for future ChatGPT model versions. Allowing it means your content becomes a candidate for inclusion in future training corpora. If your content is intellectual property you do not want trained on, block GPTBot specifically — but be aware that this also limits your visibility in ChatGPT's trained-knowledge mode.

What is the difference between Googlebot and Google-Extended?

Googlebot crawls for Google's primary search index, which powers organic search rankings and indirectly powers AI Overview and Gemini. Google-Extended is a separate crawler signal that controls whether Google can use your content for AI training and AI surface eligibility. Blocking Google-Extended preserves your organic Google rankings while disqualifying you from AI Overview citation.

How long after updating robots.txt do changes take effect?

24-72 hours for most crawlers. Google caches robots.txt for up to 24 hours. Other crawlers vary. Plan for a 3-day window before re-running an AI search visibility audit to measure the impact of robots.txt changes.

Do I need to allow every AI crawler, or just the major ones?

For most brands, allow the six primary crawlers: Googlebot, Google-Extended, Bingbot, GPTBot, ClaudeBot, and PerplexityBot. The optional secondary crawlers (CCBot, Bytespider, Anthropic-AI, Applebot-Extended) expand reach into downstream AI products and are recommended unless you have specific reasons to block.

What if I use Cloudflare and bots still appear blocked?

Cloudflare's Bot Fight Mode will block AI crawlers regardless of your robots.txt. Disable Bot Fight Mode or add explicit allow rules for AI crawler user agents and IP ranges. Cloudflare's "Verified Bots" list does not yet cover all AI crawlers, so manual whitelisting is sometimes required.

Can I check whether my robots.txt is correctly configured?

Yes. Google Search Console has a robots.txt report (the successor to the retired robots.txt tester) that shows the file Google fetched and any parsing problems, for Google's crawlers specifically. For other AI crawlers, the practical check is server log analysis a week after deployment (per the verification commands in this guide). If you see crawl traffic from each bot you allowed, the configuration is working.

Should I publish an llms.txt file alongside robots.txt?

llms.txt is an emerging standard separate from robots.txt — it provides AI systems with a curated summary of your site rather than a crawl access policy. It does not replace robots.txt. Adoption is still uneven across AI platforms but publishing one is low-cost and forward-compatible.

Measure your AI crawler health alongside surface rate

robots.txt is the input layer. Surface rate is the output layer. Citare measures both — crawler access health on your site plus your brand's surface rate across Google AI Overview, ChatGPT, Gemini, and Perplexity.

Run your free AI visibility audit → [citare.ai/audit]
