Guide 105

FAQ Schema for AI Visibility: A Design Guide

How to design FAQ content that earns AI Overview citations — question phrasing, answer length, factual density, common mistakes, validation.

Last updated: May 2026

Among all schema types we deploy across brand audits, FAQPage produces the largest measurable AI Overview citation lift per hour of work. The schema is simple. The leverage is enormous. And most teams waste it.

The reason: most teams write FAQ content like SEO copy — keyword-targeted, slightly evasive, padded for length. AI platforms select FAQ content for citation based on different criteria. Question phrasing that matches conversational queries. Answers that are direct, self-contained, and factually dense. Schema that exactly matches visible page content.

Schema syntax is the easy part — covered in Structured Data and JSON-LD for AI Search. This guide is the design layer: what makes FAQ content actually citable by AI Overview, and the patterns that distinguish a high-citation FAQ page from one that gets ignored. The parent pillar Google AI Overview Optimization provides the broader context.

Why FAQ Schema Dominates AI Overview Citation

Three mechanisms make FAQPage the highest-leverage AIO citation lever.

1. AI Overviews are answers; FAQ schema is pre-formatted answers

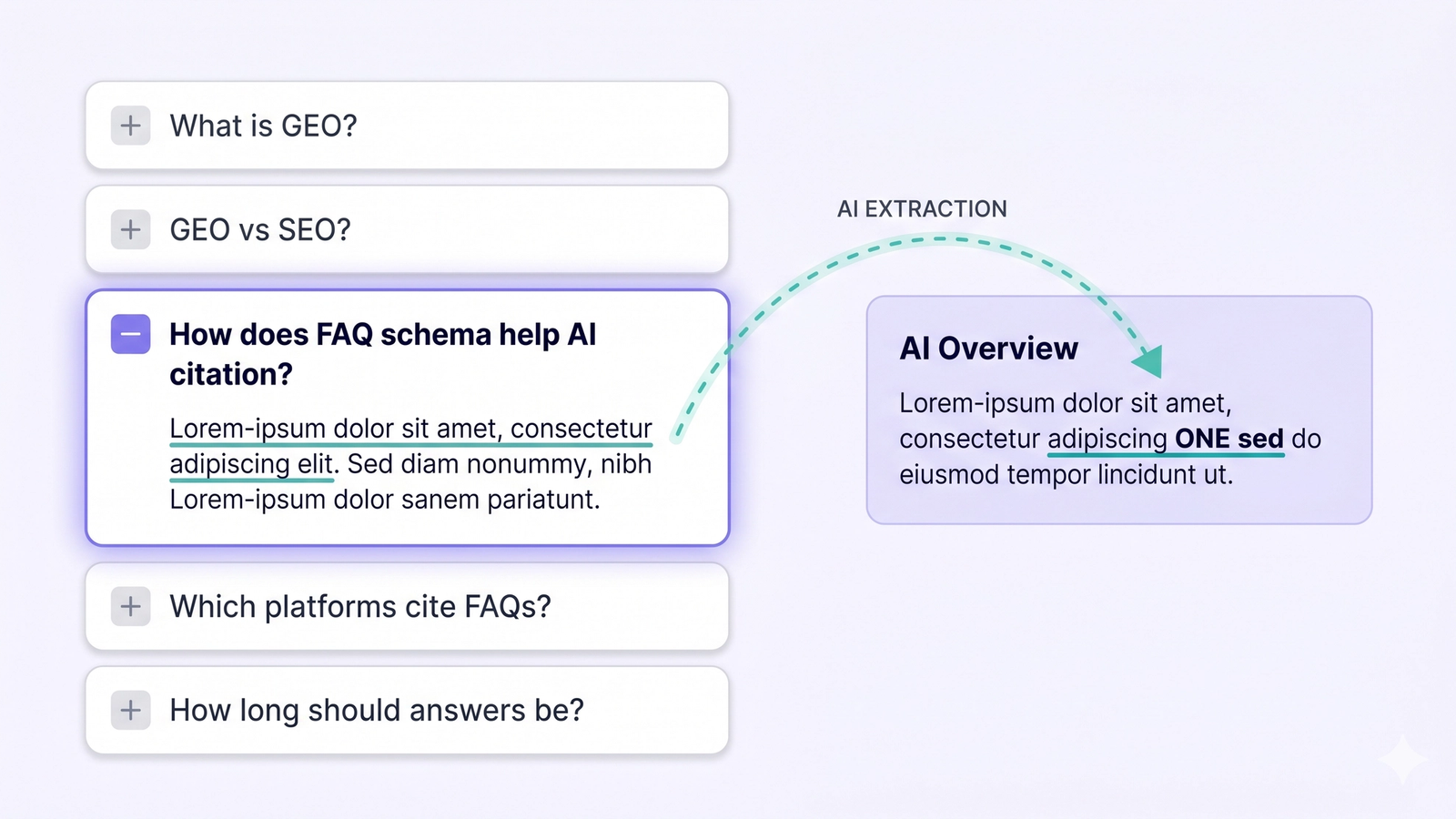

When a user types a question into Google, AI Overview generates a synthesized answer at the top of the SERP. Generating that answer requires extracting authoritative responses from candidate sources. FAQPage schema is the most direct match — it explicitly delivers the model exactly what it needs: a question paired with a canonical answer.

The model doesn't have to infer your answer from prose. It doesn't have to extract the answer from a buried paragraph. The answer is already in acceptedAnswer.text, ready for citation.
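To make the question-answer pairing concrete, here is a minimal sketch that builds a FAQPage JSON-LD object from plain question/answer pairs. The property names (`mainEntity`, `name`, `acceptedAnswer`, `text`) are the standard schema.org ones; the helper name `build_faq_jsonld` is an assumption for illustration, not part of any library.

```python
import json


def build_faq_jsonld(pairs):
    """Return a schema.org FAQPage dict from (question, answer) tuples."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                # The canonical answer lives in acceptedAnswer.text.
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = build_faq_jsonld([
    (
        "How does FAQ schema help AI citation?",
        "FAQ schema dominates AI Overview citation because it removes "
        "extraction ambiguity.",
    ),
])

# Serialize for embedding in a <script type="application/ld+json"> tag.
print(json.dumps(faq, indent=2))
```

Generating the markup from the same source as the visible FAQ keeps the two from drifting apart.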

2. FAQ schema removes citation ambiguity

When AI Overview evaluates a page for citation, it weighs factors like factual claims, author credibility, content freshness, and source authority. Body-text content adds noise to this evaluation — the model has to decide which sentence is the answer. FAQ schema eliminates that noise. The schema tells the model: "this exact text is the canonical answer to this exact question."

Citation context becomes more reliable when ambiguity is removed. AIO prefers high-confidence sources. FAQ schema is the highest-confidence answer format on the web.

3. Question phrasing matches user query patterns

The third mechanism is selection-side. When a user asks "How does FAQ schema help AI citation?", AI Overview looks for sources that contain that exact question — phrased in the user's voice, not in marketing copy. FAQPage schema lets you anchor specific questions in your content. If your question phrasing matches user query phrasing, your content is far more likely to be selected.

The Three Properties of Effective FAQ Content

Among brands we've audited with FAQPage schema deployed, the difference between low-citation and high-citation FAQ pages is not the schema syntax. The schema is identical. The difference is content design.

Three properties consistently distinguish citable FAQ content:

- Question phrasing matches conversational queries

- Answers are direct and self-contained — front-loaded, not buried

- Answers are factually dense and attributable — specific, not vague

The next sections expand each.

Question Phrasing — Write the Way Users Ask

The most common mistake in FAQ content: writing question titles for SEO keyword targeting instead of for actual user query phrasing.

Compare:

- User-style (use): "Is Mixpanel or Amplitude better for product-led SaaS?" · SEO-style (avoid): "Mixpanel vs Amplitude comparison"

- User-style (use): "How long does it take to see AIO citation improvements?" · SEO-style (avoid): "AIO citation timeline"

- User-style (use): "What's the difference between GPTBot and Bingbot?" · SEO-style (avoid): "GPTBot Bingbot comparison"

- User-style (use): "Should I block AI crawlers?" · SEO-style (avoid): "AI crawler blocking"

The user-style versions match how real users phrase queries when typing into AI assistants. They are full sentences ending in a question mark. They use natural conversational language. They include the exact phrasing buyers use.

The SEO-style versions are keyword strings, optimized for an older search-rank model that scored keyword-density. They do not match how users phrase AI queries. AI Overview is far less likely to select them.

The phrasing test

For every FAQ question, ask: would a real user, talking to ChatGPT or Gemini, type this exact sentence? If the answer is no, rewrite. The phrasing test is the single most useful filter for FAQ design.

Voice-search compatible questions

Voice-search queries are a strong proxy for AI assistant queries. Both are conversational, both are full-sentence, both end in question marks. If your FAQ questions read naturally when spoken aloud, they'll likely match AI query patterns too.
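The phrasing test is a human judgment, but a rough automated pre-filter can flag obvious keyword strings before review. The sketch below is only a heuristic: the opener list, the four-word minimum, and the function name `looks_conversational` are all assumptions, not rules any AI platform publishes.

```python
import re

# Assumed list of question openers; extend it for your own audience.
QUESTION_OPENERS = {
    "how", "what", "why", "when", "where", "who", "which",
    "is", "are", "can", "do", "does", "should", "will",
}


def looks_conversational(question: str) -> bool:
    """Rough check: a full-sentence question rather than a keyword string."""
    words = re.findall(r"[A-Za-z']+", question.lower())
    return (
        question.strip().endswith("?")
        and len(words) >= 4
        # Strip contractions so "what's" counts as "what".
        and words[0].split("'")[0] in QUESTION_OPENERS
    )


for candidate in ["Should I block AI crawlers?", "AI crawler blocking"]:
    print(candidate, "->", looks_conversational(candidate))
```

Anything the filter rejects still deserves a manual read; anything it accepts still has to pass the "would a real user type this?" test.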

Answer Length — 40 to 100 Words Is the Citation Sweet Spot

We've measured AIO citation rates against FAQ answer length across hundreds of pages. The sweet spot is 40-100 words. Both shorter and longer underperform.

Too short (under 30 words)

Answers under 30 words frequently fail to provide enough context for AIO to reuse the answer in its synthesized response. The model tries to extract the answer for citation, but the text is too thin to stand alone. Result: the source gets demoted in selection.

Example of an answer too short:

> "Yes, FAQ schema helps AI citation."

This is technically a direct answer but provides no extractable substance. AIO either ignores it or pads it with content from elsewhere — at which point your FAQ is no longer the citation anchor.

Sweet spot (40-100 words)

40-100 words gives the model enough to extract a complete, contextualized answer. The first sentence is the direct answer. The second and third sentences add the supporting "why" or relevant clarification.

Example of an answer in the sweet spot:

> "FAQ schema dominates AI Overview citation because it removes extraction ambiguity. AI platforms parse the schema directly to identify question-answer pairs, eliminating the need to infer the answer from prose. This reduces citation uncertainty and increases your selection probability for queries matching your question phrasing."

This is about 45 words. Direct answer in sentence one. Mechanism explanation in sentences two and three. Compact but contextualized.

Too long (over 150 words)

AIO extracts the first 1-3 sentences from FAQ answers in the vast majority of citations. Anything beyond the first ~150 words gets clipped. Long FAQ answers waste content effort — the additional words don't make it into the citation.

Worse: long answers risk burying the core answer beneath qualifications. AI extraction prefers front-loaded answers. If your direct answer is in sentence five, you've already lost.

The structural pattern that works

First sentence: the direct answer. Affirmative or negative, factual.

Second sentence: the most important supporting detail.

Third sentence (optional): a specific number, example, or named alternative.

Three sentences. ~50-80 words. Done.
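The length bands in this section can be checked mechanically. This is a minimal sketch using the thresholds stated in this guide (under 30 words, 40-100 words, over 150 words); the labels for the in-between ranges and the function name are assumptions added for illustration.

```python
def classify_answer_length(answer: str) -> str:
    """Bucket an FAQ answer by whitespace-separated word count."""
    words = len(answer.split())
    if words < 30:
        return "too short"
    if words < 40:
        return "borderline short"  # assumed label for the 30-39 gap
    if words <= 100:
        return "sweet spot"
    if words <= 150:
        return "long"  # assumed label for the 101-150 range
    return "clip risk"


print(classify_answer_length("Yes, FAQ schema helps AI citation."))
```

Word count says nothing about whether the first sentence is the direct answer, so treat this as a first pass, not a quality score.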

Factual Density — Specific Claims Beat Platitudes

The most under-appreciated property of citable FAQ content is factual density. AI platforms select sources that include specific, attributable claims because those produce more reliable citations. Vague answers get filtered out at the selection stage.

Vague vs specific — the dimension that matters

- Vague: "Many factors influence AI search visibility." · Specific (high citation): "Three factors drive AI search visibility: crawler access, structured data, and content semantic completeness."

- Vague: "FAQ schema can help your content rank better." · Specific (high citation): "FAQ schema is the single highest-leverage AIO citation lever, with measurable lift in 4-8 weeks across most brand audits."

- Vague: "Most brands should consider AI search optimization." · Specific (high citation): "Indian D2C and SaaS brands have the largest GEO opportunity: most have weak SEO foundations and zero existing GEO investment."

- Vague: "Update your content regularly for best results." · Specific (high citation): "Refresh dateModified on priority pages every quarter; stale dates depress AIO citation by 20-40% even when content is accurate."

The specific versions contain numbers, named alternatives, time horizons, mechanisms. The vague versions could be written by anyone, about anything. AI extraction selects specifics.

Patterns that increase factual density

- Cite numbers — percentages, time ranges, quantities, prices

- Name alternatives — competitors, complementary tools, comparison points

- Specify mechanisms — "because X causes Y" rather than "this helps"

- State conditions — "when X is true, Y applies"

- Reference data — even informal — "in our audits", "our clients see", "research shows"
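Some of these patterns can be spotted with simple text checks during an audit. The sketch below flags four of the five; named alternatives need a human or a brand list, so they are left out. The regular expressions and the function name `density_signals` are assumptions, and a match only means the pattern is present, not that the claim is accurate.

```python
import re


def density_signals(answer: str) -> dict:
    """Flag which factual-density patterns appear in an answer (heuristic)."""
    text = answer.lower()
    return {
        "number": bool(re.search(r"\d", text)),
        "mechanism": "because" in text,
        "condition": bool(re.search(r"\b(when|if|unless)\b", text)),
        "evidence": bool(
            re.search(r"\b(in our audits?|our clients see|research shows|data shows)\b", text)
        ),
    }


vague = "Update your content regularly for best results."
specific = "Industry data shows 62% of AIO-cited pages don't rank in the top 10."
print(sum(density_signals(vague).values()), sum(density_signals(specific).values()))
```

An answer with no signals at all is a strong candidate for a rewrite.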

Attribution makes claims credible

Sentences structured as "X is the case because Y, according to Z" are more citable than declarative claims without attribution. AI Overview is conservative about inheriting unsupported claims. Pages that show their work get cited preferentially.

You don't need formal academic citations. You need observable patterns of evidence:

- "In our audit of 30 D2C brands…"

- "Industry data shows 62% of AIO-cited pages don't rank in the top 10."

- "Across the 4 major AI platforms…"

These patterns signal "this answer is grounded" — which is what AIO selects for.

How Many FAQ Questions Per Page?

The right FAQ length varies by page type.

- Pillar pages / long guides — FAQ count: 8–15 questions · Reason: Comprehensive coverage of category-level questions

- Cluster posts / mid-length guides — FAQ count: 5–10 questions · Reason: Topic-specific objections and clarifications

- Product pages — FAQ count: 4–7 questions · Reason: Objection-handling, pricing clarity, integration

- Service pages — FAQ count: 4–7 questions · Reason: Scope, timeline, cost, deliverables

- Local landing pages — FAQ count: 4–6 questions · Reason: Hours, accessibility, parking, services offered

- Short pages — FAQ count: 3–5 questions · Reason: Below 3, schema overhead exceeds benefit

Below 3 questions, FAQPage schema is not worth the implementation cost. Above 20, you dilute focus and risk having multiple competing answers — better to split across multiple pages.

The implicit rule: let the content set the count. If 8 questions feel forced, publish fewer. If your FAQ feels thin at 5 questions, add more. Don't pad to hit a count.
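For teams auditing many pages, the ranges above fit in a small lookup. This is a sketch only: the numbers are copied from this section, while the dictionary keys, return labels, and function name are illustrative assumptions.

```python
# Typical FAQ counts per page type, as (low, high), copied from the list above.
FAQ_COUNT_RANGES = {
    "pillar": (8, 15),
    "cluster": (5, 10),
    "product": (4, 7),
    "service": (4, 7),
    "local": (4, 6),
    "short": (3, 5),
}


def check_faq_count(page_type: str, count: int) -> str:
    """Compare a page's FAQ count with the guide's suggested range."""
    low, high = FAQ_COUNT_RANGES[page_type]
    if count < 3:
        return "skip schema"          # below 3, not worth the overhead
    if count > 20:
        return "split across pages"   # above 20, focus is diluted
    if count < low:
        return "below typical range"
    if count > high:
        return "above typical range"
    return "within range"


print(check_faq_count("product", 5))
```

A result outside the typical range is a prompt to look at the page, not a reason to add or cut questions automatically.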

Common FAQ Schema Mistakes

The schema is simple. The mistakes that produce zero-citation FAQ pages are recurring.

1. Schema doesn't match visible page content

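Google's structured data guidelines require marked-up content to be visible on the page. If your FAQPage schema lists 10 questions but the visible page shows only 3, the markup violates those guidelines and the page can lose rich result eligibility. Schema and visible content must agree word-for-word.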

The fix: render the FAQ visibly on the page in addition to embedding the schema. Most FAQPage implementations should have a corresponding visible <details>/<summary> accordion or a structured FAQ section.
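A mismatch between schema and visible content is easy to detect in a build step. The sketch below assumes you already have the parsed FAQPage JSON-LD and the page's visible text as a string (how you extract that text is up to your stack). It uses exact substring matching after whitespace normalization, which is a deliberately strict assumption and not a description of how Google checks.

```python
def normalize(text: str) -> str:
    """Collapse all runs of whitespace to single spaces."""
    return " ".join(text.split())


def find_schema_mismatches(faq_jsonld: dict, visible_text: str) -> list[str]:
    """List schema questions or answers that do not appear verbatim on the page."""
    page = normalize(visible_text)
    problems = []
    for item in faq_jsonld.get("mainEntity", []):
        question = normalize(item["name"])
        answer = normalize(item["acceptedAnswer"]["text"])
        if question not in page:
            problems.append(f"question not visible: {question}")
        elif answer not in page:
            problems.append(f"answer differs for: {question}")
    return problems


schema = {
    "mainEntity": [{
        "name": "Can FAQ schema be too long?",
        "acceptedAnswer": {"text": "Yes. Beyond 20 questions per page, you dilute focus."},
    }]
}
print(find_schema_mismatches(schema, "Can FAQ schema be too long? Yes, sometimes."))
```

An empty list means every schema question and answer was found in the visible text.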

2. Generic, undifferentiated answers

Answers that any source could provide get cited less than answers that include specific data, examples, or named alternatives. If your answer reads like it could appear on any competitor's site, rewrite.

3. Q&A format that doesn't actually contain question marks

Some AI parsers care about literal question mark presence. "How to set up GPTBot access" reads like a heading. "How do I set up GPTBot access?" reads like a question. The latter is more reliably parsed. Always end question titles with a question mark.

4. Overly long answers

Anything over 150 words risks being clipped or skipped. Front-load the direct answer.

5. Stale answers not updated when product/category changes

When your product changes, your FAQ answers go stale. AIO penalizes stale schema. Audit your FAQ content quarterly. If pricing changes, update FAQ answers about pricing. If product features change, update relevant questions.
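A quarterly audit is easier to enforce with a date check. This sketch assumes each page records an ISO-format `dateModified` value (the schema.org property this guide mentions elsewhere) and treats "quarterly" as 90 days; both the window and the function name are assumptions.

```python
from datetime import date


def is_stale(date_modified: str, today: date, max_age_days: int = 90) -> bool:
    """True when an ISO date (YYYY-MM-DD) is older than the review window."""
    modified = date.fromisoformat(date_modified)
    return (today - modified).days > max_age_days


# Example: a page last modified on 1 January, checked on 1 May of the same year.
print(is_stale("2026-01-01", today=date(2026, 5, 1)))
```

Updating the date without reviewing the answers defeats the purpose; the check only tells you which pages are due for a read-through.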

6. Multiple FAQPage blocks on the same page

Some sites embed FAQPage schema inside multiple page sections — main content, sidebar, footer — each in its own block. This confuses AI parsers about which is canonical. Consolidate into one FAQPage block per page.
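Duplicate blocks are simple to detect in rendered HTML. The following sketch uses only Python's standard library to count top-level FAQPage objects in `application/ld+json` scripts. It is an assumption-laden minimum: it does not look inside `@graph` arrays or handle `@type` given as a list, and the class and function names are illustrative.

```python
import json
from html.parser import HTMLParser


class JsonLdCollector(HTMLParser):
    """Collect the text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self.blocks = []
        self._in_jsonld = False

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data


def count_faqpage_blocks(html: str) -> int:
    """Count top-level FAQPage objects across all JSON-LD scripts."""
    collector = JsonLdCollector()
    collector.feed(html)
    count = 0
    for raw in collector.blocks:
        try:
            node = json.loads(raw)
        except json.JSONDecodeError:
            continue  # skip malformed JSON-LD rather than crash the audit
        nodes = node if isinstance(node, list) else [node]
        count += sum(
            1 for n in nodes if isinstance(n, dict) and n.get("@type") == "FAQPage"
        )
    return count
```

A result greater than 1 for a single page is the signal to consolidate.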

7. Schema FAQ questions you wouldn't actually want answered

This is subtle. Some teams add FAQ questions to the schema that handle edge-case objections poorly. "Is it true that your tool doesn't support X?" — answered honestly — surfaces the negative on AI platforms. FAQ schema is a public commitment to those answers. Audit for self-defeating questions.

FAQ Design Patterns by Page Type

Different page types call for different FAQ structures.

Definitional pages — start with definition, then breadth

For pages defining a category-level term (like "What is GEO?"), the FAQ should start with the definitional question, then move outward to related conceptual questions.

Suggested order: 1. What is [term]? 2. How is [term] different from [adjacent term]? 3. Why does [term] matter now? 4. Who needs [term]? 5. How do I get started with [term]?

Comparison pages — comparison-anchored questions, persona-by-persona

For "X vs Y" pages, structure FAQs around comparative buyer questions, organized by buyer persona.

Suggested order: 1. Is X or Y better for [persona A's use case]? 2. Is X or Y better for [persona B's use case]? 3. What does X do that Y doesn't? 4. What does Y do that X doesn't? 5. When should I switch from X to Y?

Product pages — objection-handling first

Product page FAQs should anticipate buyer objections and answer them directly.

Suggested order: 1. How much does [product] cost? 2. Does [product] integrate with [common tool]? 3. How long is the setup process? 4. What if I need help during onboarding? 5. Can I cancel or downgrade?

Service pages — scope and deliverables

Service pages should make the engagement model concrete.

Suggested order: 1. What does [service] include? 2. How long does a typical engagement take? 3. What outputs do I receive? 4. Who on my team needs to be involved? 5. What if the engagement scope changes mid-project?

Local landing pages — practical specifics

For physical-location pages, focus on practical buyer needs.

Suggested order: 1. What are your hours? 2. Where is [location] (with directions)? 3. Is parking available? 4. Do you offer [specific service] at this location? 5. How do I book?

Guide pages — progressive depth

For long-form guides, structure FAQs from basic to advanced so users can find the level matching their existing knowledge.

Suggested order: 1. What is [topic]? 2. How is [topic] different from [related topic]? 3. How do I implement [topic basics]? 4. What are common mistakes with [topic]? 5. How do I measure [topic] working?

Validating That Your FAQ Schema Is Working

Three validation layers, each catching different failures.

1. Google Rich Results Test

search.google.com/test/rich-results: paste your URL. Google validates the FAQPage schema and shows a preview of how it might appear in rich results. Critical first check.

2. Schema Markup Validator

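validator.schema.org: vendor-neutral schema validation. It checks general schema.org syntax rather than Google's feature-specific requirements, so it is more lenient than Google's tool but can catch issues in properties Google does not evaluate.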

3. Live AIO citation test

The ultimate validation: run a query that should surface your FAQ in Google AI Overview. If your FAQ is well-designed and the page is indexed, you should see your content cited within 4-8 weeks. If not, there's a deeper issue (crawler access, content quality, competition).

For ongoing monitoring, an AI search visibility tool that captures AIO citations across query sets and tracks citation rate over time is the operational answer. (See How to Measure AI Search Visibility for the full framework.)

Frequently Asked Questions

How many FAQ questions should I include on one page?

For pillar pages and long guides, 8-15 questions. For cluster posts and mid-length pages, 5-10. For product or service pages, 4-7 focused on objection-handling. Below 3 questions, the schema overhead exceeds the benefit.

Can FAQ schema be too long?

Yes. Beyond 20 questions per page, you dilute focus. AI extraction also clips long answers — anything over 150 words per answer gets truncated. Front-load direct answers and use multiple FAQ pages for breadth.

What if my FAQ answers change frequently?

Update them. Stale FAQ answers depress AIO citation. Audit your FAQ content at least quarterly, and more often for fast-moving categories or actively changing products. The schema's dateModified (on the parent page) signals freshness to AIO.

Should I duplicate the same FAQ across multiple pages?

No. Duplicate FAQ content competes against itself for citation and dilutes AIO selection. If a question is broadly relevant, place it on the most authoritative page for that topic and link to it from related pages.

Will Google rank my FAQ if AI doesn't cite it?

Possibly. FAQPage schema can produce traditional Google rich results (the FAQ accordion-style snippets) even when AIO doesn't cite the page. The two surfaces are evaluated separately. Well-designed FAQ schema lifts both.

Should I use H3 questions or structured FAQPage schema?

Both. The visible page should use H3 question headings with answers below — this is what users read. The FAQPage schema in JSON-LD is the structured representation that AI platforms parse. Schema and visible content must match.

Does FAQ schema help with ChatGPT and Perplexity, not just AI Overview?

Yes. AI Overview is the most directly affected, but ChatGPT and Perplexity also weight FAQ schema in their citation logic. The leverage is highest for AIO; the schema benefits all four major AI platforms.

See Your AIO Surface Rate

FAQ schema is the highest-leverage AIO citation lever. Citare measures the lift — running surface rate audits before and after FAQ schema deployment, isolating the citation impact across query types.

Run your free AI visibility audit → [citare.ai/audit]
