Guide 3

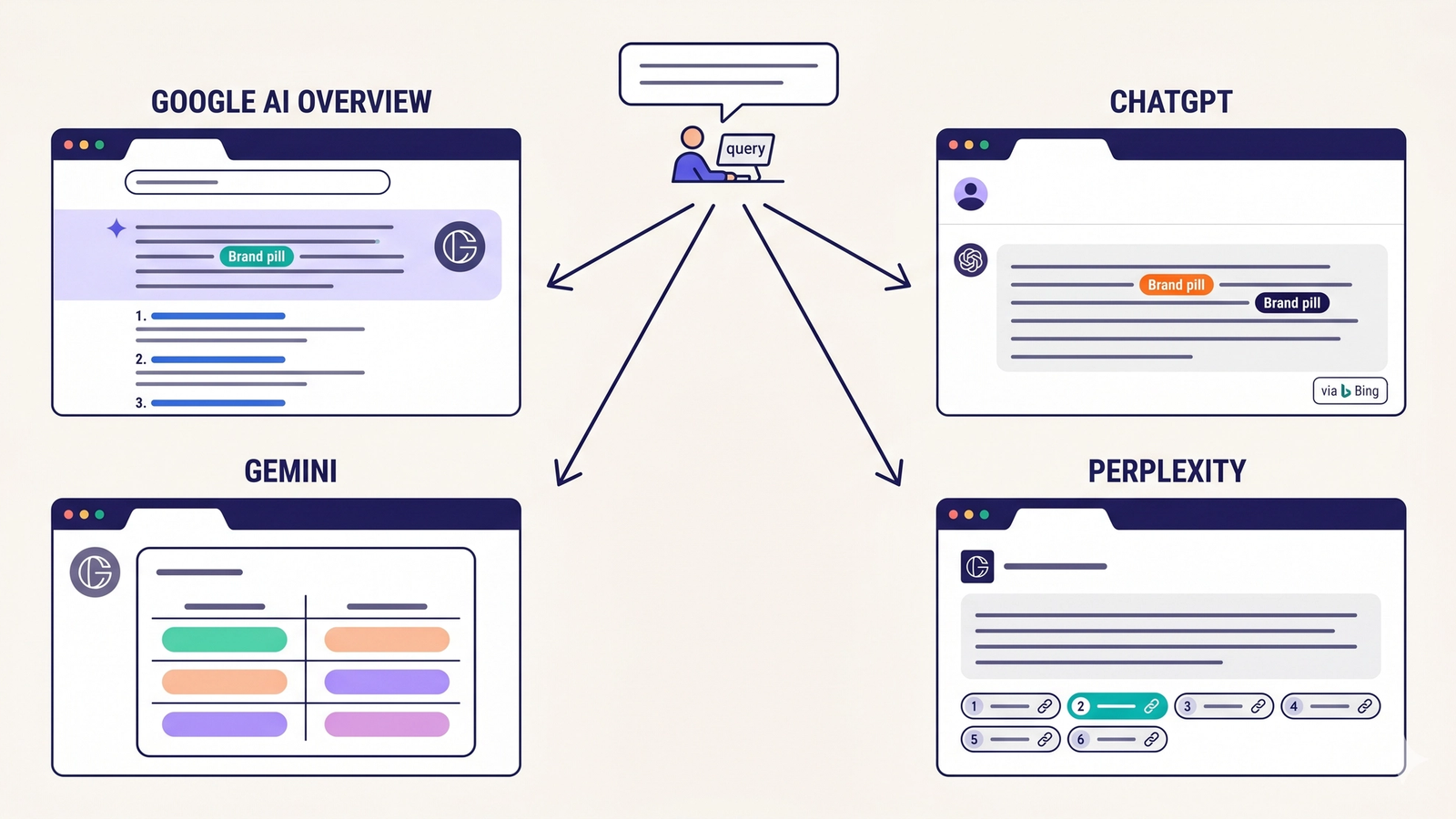

The Four AI Search Platforms Explained: Google AI Overview, ChatGPT, Gemini, and Perplexity

Google AI Overview, ChatGPT, Gemini, and Perplexity each source content differently. This guide covers the mechanics behind why your brand ranks differently on each.

Last updated: May 2026

Same brand. Same query. Four different answers.

In a brand audit we ran on a single Indian D2C company across 300 AI queries, surface rates broke down like this: 43% on Google AI Overview, 8% on ChatGPT, 29% on Gemini, 12% on Perplexity. Same brand, same product, same week. The asymmetry is not an outlier. It is the structural reality of AI search in 2026.

Most marketing teams talk about "AI search" as if it is one thing. It is not. There are four major platforms with meaningful query volume, each with its own index, its own crawler, its own ranking logic, and its own audience. Being visible on one does not predict visibility on the others.

This guide is the canonical reference for how each of the four platforms works, what makes a brand citable on each, and why the same content can succeed on one and fail on another. If you have not yet read the foundational guides, start with What is Generative Engine Optimization (GEO)? and GEO vs SEO.

There Is No Unified AI Search Index

The mental model most marketing teams import from SEO is wrong for AI search.

In SEO, you optimize once for Google. The other engines (Bing, DuckDuckGo, Yandex) follow similar enough principles that good Google SEO usually translates. Optimization effort is roughly fungible.

In AI search, this fungibility breaks. Each platform has architectural choices that propagate through the entire experience — what gets crawled, what gets cited, how often the index updates, what content format the model prefers. A page perfectly optimized for Google AI Overview can be invisible on ChatGPT because ChatGPT's web search uses Bing's index, and Bing might not have crawled the page yet.

The way to understand AI search is to understand the four platforms as four different products, not four versions of the same thing.

The Four-Index Reality

Every AI search platform answers two architectural questions: what data does the model see (the grounding source), and how does the model decide what to surface (the ranking logic). The grounding source is the most important difference between the platforms.

- Google AI Overview — Grounding source: Google index · Crawler: Googlebot + Google-Extended

- Gemini — Grounding source: Google index (different routing) · Crawler: Googlebot + Google-Extended

- ChatGPT (web search) — Grounding source: Bing index · Crawler: Bingbot (search) + GPTBot (model)

- Perplexity — Grounding source: Perplexity's own index · Crawler: PerplexityBot

Two platforms (AIO and Gemini) share Google's index. Two platforms (ChatGPT and Perplexity) source from elsewhere entirely. This means improving your Google index health helps on two of the four platforms and does nothing for the other two.
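The grounding list above can be written as a small lookup, which makes it easy to see which platforms move together. This is a minimal sketch: the dictionary layout and the function names are illustrative, and the index and crawler values are simply the ones listed in this guide.

```python
from collections import defaultdict

# Grounding sources and crawlers as listed in this guide.
PLATFORMS = {
    "Google AI Overview": {"index": "Google", "crawlers": ["Googlebot", "Google-Extended"]},
    "Gemini": {"index": "Google", "crawlers": ["Googlebot", "Google-Extended"]},
    "ChatGPT (web search)": {"index": "Bing", "crawlers": ["Bingbot", "GPTBot"]},
    "Perplexity": {"index": "Perplexity", "crawlers": ["PerplexityBot"]},
}


def platforms_by_index(platforms):
    """Group platform names by the index they ground against."""
    grouped = defaultdict(list)
    for name, info in platforms.items():
        grouped[info["index"]].append(name)
    return dict(grouped)


def platforms_affected_by(crawler, platforms):
    """Return the platforms whose listed crawlers include the given one."""
    return [name for name, info in platforms.items() if crawler in info["crawlers"]]


groups = platforms_by_index(PLATFORMS)
print(groups)
print(platforms_affected_by("Bingbot", PLATFORMS))
```

Grouping by index shows three distinct grounding sources behind four platforms, so index work on one source only carries over to the platforms in the same group.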

There are also adjacent platforms with smaller volume but growing relevance:

- Anthropic Claude with web search uses Brave Search via API

- Microsoft Copilot is Bing-derived (similar profile to ChatGPT)

- Mistral Le Chat uses its own and partner sources

- You.com uses a combination of search providers

These do not yet move pipeline for most brands but warrant monitoring as their query share grows.

Google AI Overview — Deep Dive

Google AI Overview (AIO) is the AI-generated answer block that appears at the top of a Google search results page. It is rolling out progressively and currently appears on a significant share of informational queries — by mid-2026 the share is over 30% of all Google searches in measured markets.

How it sources

AIO grounds against Google's main search index. The same pages that rank in Google's organic results are the candidate set from which AIO selects citations. However, the selection criteria are different: AIO does not cite based on rank position. It cites based on a separate relevance and synthesis evaluation.

The most important data point about AIO citation: 62% of pages cited in Google AI Overviews do not rank in the top 10 organically for the same query. That is a structural decoupling. Pages that win AIO citations have characteristics — semantic completeness, structured Q&A formatting, clear factual claims, schema markup — that diverge from pure rank-optimized pages.

Geo-contextualization

AIO is geo-aware. The same query from Bangalore, Mumbai, and Delhi can produce different brand citations within the same answer block. This is most visible for queries with local intent — restaurants, services, retail, healthcare — but applies subtly to others as well. Brands with strong national Google presence but weak local structured data (LocalBusiness JSON-LD, consistent NAP across directories, Google Business Profile completeness) drop out of city-level AIO surfaces.

What AIO rewards

In our brand audits, the pages most consistently cited in AIO share this profile:

- FAQ schema with explicit question-answer pairs

- H2/H3 structure that mirrors common search query phrasing

- Tables and lists for comparative or enumerated information

- Recent dateModified (freshness signals matter for AIO more than for organic rank)

- Organization schema with comprehensive properties

- Author byline with E-E-A-T-supportive context

- Inline factual claims with attribution
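The first item in this profile, FAQ schema, can be generated rather than hand-written. The sketch below builds a schema.org FAQPage object from question-answer pairs. The function name faq_jsonld is hypothetical, and the sample pair is borrowed from the FAQ later in this guide.

```python
import json


def faq_jsonld(pairs):
    """Build a schema.org FAQPage object from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


faq = faq_jsonld([
    (
        "Does Perplexity use Google's index?",
        "No. Perplexity runs its own crawler (PerplexityBot) and builds its own index.",
    ),
])

# Embed the printed JSON in a <script type="application/ld+json"> tag on the page.
print(json.dumps(faq, indent=2))
```

Keeping the visible Q&A text and the JSON-LD generated from the same source pairs is one way to avoid the two drifting apart.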

What blocks AIO citation

- Google-Extended blocked in robots.txt (the most common cause of AIO invisibility)

- Content locked in images (PNG cards, infographics): AIO does not OCR at citation time

- JavaScript-rendered content that does not pre-render — AIO uses a different crawl path than organic Google

- Thin or duplicated content — AIO heavily penalizes both

- No structured data — AIO can still cite plain pages but the lift required is much higher

How to track Google AI Overview citations

If you are building infrastructure to monitor AIO citations, the requirement is screenshot-based capture of the SERP, geo-seeded for the markets you care about, parsed for the AI Overview block, and tracked over time. Querying Google's API does not return AIO content — it has to be captured from the live SERP UI. Citare's monitoring runs a Playwright-based AIO capture pipeline with vision-parse extraction for exactly this reason.

ChatGPT — Deep Dive

ChatGPT is the most-used AI assistant globally, with usage measured in the hundreds of millions of weekly active users. For brand visibility, ChatGPT operates in two distinct modes that need to be understood separately.

Mode 1 — trained-knowledge

The base ChatGPT model produces responses from its training data. The training data has a cutoff date, and knowledge of brands depends on whether the brand appeared with sufficient frequency in the training corpus before that date. Very new brands, recently rebranded brands, or brands without significant English-web presence may be entirely absent from base ChatGPT responses regardless of current SEO or website quality.

This is why some founders find their company invisible to ChatGPT for the first 12-18 months of operation even with strong PR — the training corpus is the only source, and the next training cycle has not happened yet.

Mode 2 — web search (live)

When a user enables web search or asks a query that triggers ChatGPT's browse capability, the model issues real-time queries against Bing's search API and grounds its response in the returned results. This is the mode that matters for most brand visibility work because it is decoupled from training cutoffs.

The critical fact: ChatGPT's web search uses Bing's index, not Google's. A brand that is well-indexed on Google but poorly indexed on Bing will be invisible to ChatGPT web search regardless of Google rank.

The Indian brand Bing-coverage gap

Indian brands are historically under-indexed on Bing relative to their US-equivalent counterparts. Bingbot has thinner coverage of .in domains, fewer Indian sites submit sitemaps to Bing Webmaster Tools, and the prevailing habit is to optimize exclusively for Google. The result: a class of Indian brands that rank well on Google and are entirely missing from ChatGPT.

The fix is unglamorous and high-leverage:

- Submit your sitemap to Bing Webmaster Tools

- Verify Bingbot is allowed in robots.txt

- Monitor your Bing index coverage monthly

- Where coverage is thin, request indexing of priority pages directly through BWT

For most Indian brands this single workflow, which takes a few hours in total, produces a meaningful uplift in ChatGPT brand mentions within 4-8 weeks.
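Before submitting to Bing Webmaster Tools, it helps to list what the sitemap actually contains, since those URLs are the candidates for manual indexing requests. The sketch below parses a standard sitemap with the Python standard library. The sample sitemap and the function name sitemap_urls are hypothetical, and the submission itself is left to the BWT interface.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Hypothetical sitemap content; in practice, load your own sitemap.xml.
SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc><lastmod>2026-05-01</lastmod></url>
  <url><loc>https://example.com/pricing</loc></url>
</urlset>"""


def sitemap_urls(xml_text):
    """Return (loc, lastmod) tuples for every <url> entry in a sitemap."""
    root = ET.fromstring(xml_text)
    urls = []
    for node in root.findall("sm:url", NS):
        loc = node.findtext("sm:loc", default="", namespaces=NS).strip()
        lastmod = node.findtext("sm:lastmod", default=None, namespaces=NS)
        if loc:
            urls.append((loc, lastmod))
    return urls


pages = sitemap_urls(SITEMAP)
for loc, lastmod in pages:
    print(loc, lastmod or "(no lastmod)")
```

Entries without a lastmod value are worth a second look, because freshness is one of the signals this guide keeps returning to.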

GPTBot vs Bingbot

ChatGPT's relationship with web crawlers is also split:

- GPTBot — OpenAI's crawler used to gather training data for future model versions. Allowing GPTBot affects whether your content can become part of trained-knowledge in subsequent training cycles.

- Bingbot — Microsoft's search crawler. Allowing Bingbot affects whether your content can be retrieved during ChatGPT's live web search.

You need both allowed for full ChatGPT visibility — GPTBot for the long-term training horizon, Bingbot for the immediate live-search horizon.
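Whether both crawlers are allowed can be checked directly against a robots.txt file. The sketch below uses Python's standard urllib.robotparser. The robots.txt content and the example.com URL are hypothetical, and the helper name crawler_access is illustrative.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt; replace with the real contents of your own file.
ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""


def crawler_access(robots_txt, agents, url):
    """Report, per user agent, whether robots.txt allows fetching the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


access = crawler_access(
    ROBOTS_TXT,
    ["GPTBot", "Bingbot"],
    "https://example.com/guides/geo",
)
print(access)
```

With this sample file, GPTBot is blocked site-wide while Bingbot falls through to the wildcard rule and is allowed. In the terms used above, that is the live-search horizon open and the training horizon closed.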

ChatGPT memory

ChatGPT's memory feature stores user-specific context across sessions. For brand visibility this introduces a subtle but real signal: if a user previously interacted positively with a brand or topic in ChatGPT, the model's later responses to related queries are biased toward that brand. This makes individual user-level brand tracking less generalizable than aggregate audit measurement, and it is why persona-anchored dispatch — running queries from a clean context with a defined persona — is critical for unbiased measurement.

What blocks ChatGPT citation

- GPTBot blocked in robots.txt (training horizon)

- Bingbot blocked in robots.txt or thin Bing crawl coverage (live-search horizon)

- Content in JavaScript-rendered components — Bing's render budget is much smaller than Google's

- No Bing Webmaster Tools submission

- Content depth that fails the "would I cite this" test — ChatGPT's citation logic favors comprehensive, source-quality pages over thin keyword-targeted ones

How to get mentioned in ChatGPT

In order of leverage:

- Verify GPTBot and Bingbot allowed in robots.txt

- Submit and verify on Bing Webmaster Tools

- Audit Bing index coverage of your priority pages

- Add Organization, FAQPage, and Product schema to relevant pages

- Publish source-quality content (data, research, comprehensive guides)

- Build named-comparison content for your category — ChatGPT cites comparisons heavily

Gemini — Deep Dive

Gemini is Google's AI assistant. It grounds against Google's index (the same source as AIO) but uses different routing logic and emphasizes different signals when synthesizing answers.

Why Gemini is structurally different from AIO

Both platforms read from Google's index, so they share crawlability and indexing requirements. The differences emerge in selection and synthesis:

- Comparison aggressiveness — Gemini surfaces named-competitor content much more aggressively than AIO. Asking Gemini "is Brand A or Brand B better for X" routinely produces side-by-side comparisons with brand recommendations. AIO is more conservative with named recommendations.

- Knowledge Graph weighting — Gemini relies more on Google's Knowledge Graph for entity recognition than AIO does. Brands with strong Knowledge Graph presence (Wikipedia entry, sameAs links, knowledge panels in Google search) get cited more reliably.

- Workspace integration — Gemini is increasingly embedded in Google Workspace (Docs, Gmail, Drive) and Search. This shifts a portion of brand discovery from explicit "search" to ambient "while doing other work."

- Conversational state — Gemini retains conversational state more aggressively than AIO across multi-turn queries, allowing for follow-up brand discovery in ways AIO does not.

What Gemini rewards

In brand audits, the patterns most predictive of strong Gemini visibility:

- Wikipedia presence (or strong functional equivalent: about page with structured Organization schema)

- sameAs links pointing to canonical entity references (LinkedIn company page, Crunchbase, Twitter/X, official social channels)

- Comparison content: pages that name competitors and compare features, pricing, use cases

- Structured FAQ content

- Recent activity signals (publishing cadence, fresh data, updated pages)
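The sameAs item in this list is the easiest to get wrong by omission. The sketch below builds an Organization object with a deduplicated sameAs array. The brand name, the URLs, and the function name organization_jsonld are all placeholders.

```python
import json


def organization_jsonld(name, url, same_as):
    """Build a schema.org Organization object with a deduplicated sameAs list."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        # Sorting keeps the output stable between runs.
        "sameAs": sorted(set(same_as)),
    }


# Placeholder brand and profile URLs, for illustration only.
org = organization_jsonld(
    "Example Brand",
    "https://example.com",
    [
        "https://www.linkedin.com/company/example-brand",
        "https://www.crunchbase.com/organization/example-brand",
        "https://www.linkedin.com/company/example-brand",
    ],
)
print(json.dumps(org, indent=2))
```

Each sameAs URL should point at a profile the brand actually controls or is accurately described by, since the purpose is to tie separate references back to one entity.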

Gemini brand monitoring

Tracking Gemini citations requires a different approach than AIO. Gemini's responses are not embedded in a SERP; they appear in a chat interface. Tracking requires real query dispatch, persona-anchored, with parsed extraction of cited brands and their context (recommended, compared favorably, mentioned as alternative, etc.). The same brand can fall into more than one of these classes within a single Gemini response, and the class matters as much as the mention.

What blocks Gemini citation

- Google-Extended blocked

- Weak entity graph (no Wikipedia, sparse sameAs, missing Knowledge Graph signals)

- No comparison content for your category

- Generic content that does not differentiate the brand

- JavaScript-only rendering

Perplexity — Deep Dive

Perplexity is the most directly measurable of the four AI search platforms because it cites sources inline with linkable URLs. Every Perplexity answer includes a list of source citations, often numbered and linked, making Perplexity citation tracking closer to backlink tracking than other AI platforms.

How Perplexity sources answers

Perplexity runs its own crawler called PerplexityBot and builds its own search index. It is independent of Google and Bing. Perplexity supplements its index with real-time web search where the index is thin.

This independence means Perplexity citation patterns can diverge sharply from the other three platforms. A brand can be invisible across all of Google's surfaces and still appear on Perplexity if PerplexityBot has crawled the brand's site and the content matches Perplexity's source-quality criteria.

What Perplexity rewards

Perplexity is the platform most aligned with what content marketers historically thought of as "thought leadership content":

- Original data and research (the strongest signal)

- Comprehensive guides on a topic

- Structured comparison and how-to content

- Clear authorship and credibility signals

- Recent content (Perplexity weights freshness heavily)

- Source-quality formatting — clean paragraphs, scannable structure, clear factual claims

Audience skew

Perplexity's user base skews technical, professional, and research-oriented. Engineers, analysts, B2B decision-makers, journalists, students, and academics are over-represented relative to general consumer audiences. This is meaningful for brand visibility strategy: Perplexity is disproportionately important for B2B SaaS, enterprise software, professional services, finance, and research-intensive verticals. It is less critical for direct-to-consumer retail or local services.

Pro vs free routing

Perplexity Pro users get access to "Pro Search" which routes through more sophisticated model variants and conducts more thorough source aggregation per query. Pro queries often surface a broader source set and cite more brands per answer. Free queries are more constrained. Brand visibility tracking should account for both routes — surface rates can differ substantially.

Perplexity Pages

Perplexity's "Pages" feature generates standalone pages from Perplexity's synthesis of multiple sources, with full source attribution. When your brand is one of the cited sources on a Perplexity Page, you receive both citation and indirect referral traffic from users who navigate from the Page to your site. Pages that go viral on Perplexity drive measurable referral traffic for cited brands.

How Perplexity indexes websites

PerplexityBot follows standard crawl patterns: respects robots.txt, follows internal links, indexes parseable HTML. To improve Perplexity indexing:

- Allow PerplexityBot explicitly in robots.txt

- Maintain a clean sitemap.xml

- Render core content in HTML (not behind JavaScript-only rendering)

- Publish source-quality content (long-form, data-rich, well-structured)

- Build inbound links from authoritative sources — Perplexity's crawler discovers content through standard web link following
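The "render core content in HTML" item can be spot-checked without a browser. The sketch below extracts the text present in raw HTML, ignoring script and style blocks, and reports which key phrases are missing. It is a rough approximation of what a crawler that does not execute JavaScript would see, not a model of PerplexityBot itself. The class name, function name, and sample page are hypothetical.

```python
from html.parser import HTMLParser


class VisibleText(HTMLParser):
    """Collect text that sits outside <script> and <style> elements."""

    SKIP = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def missing_from_raw_html(html, phrases):
    """Return the phrases that do not appear in the page's static text."""
    parser = VisibleText()
    parser.feed(html)
    parser.close()
    text = " ".join(parser.chunks).lower()
    return [phrase for phrase in phrases if phrase.lower() not in text]


# Hypothetical page: the heading is static, the comparison copy is injected by JS.
RAW_HTML = (
    "<html><body><div id='root'></div><h1>Pricing</h1>"
    "<script>render('Compare plans')</script></body></html>"
)

missing = missing_from_raw_html(RAW_HTML, ["Pricing", "Compare plans"])
print(missing)
```

Any phrase in the returned list exists only inside JavaScript, which is the content most at risk of never being indexed.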

How to appear in Perplexity AI

In order of leverage:

- Allow PerplexityBot in robots.txt

- Publish original research, data, or comprehensive guides

- Maintain comparison content for your category

- Verify HTML-renderable content (no JS-only critical content)

- Cross-link your content internally — strong internal linking helps PerplexityBot discover the full content footprint

- Build authoritative inbound backlinks — these matter more for Perplexity than for AIO or Gemini

What blocks Perplexity citation

- PerplexityBot blocked in robots.txt

- JavaScript-rendered critical content

- Thin or shallow content

- No clear authorship or credibility signals

- No comparison or research-quality material

A proper Perplexity brand visibility tracker needs to dispatch real queries against Perplexity's API or interface, parse the cited source list, and attribute mentions to your brand. Citation context (was your brand a primary source, a comparison reference, or a passing mention) matters and should be captured in the dataset.
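The attribution step can be sketched on its own, assuming the cited source list has already been parsed into URLs. The code below matches citations to a brand by domain and records their position in the list. The function names and sample URLs are hypothetical, and classifying citation context would need the answer text as well, which this sketch does not attempt.

```python
from urllib.parse import urlparse


def domain_of(url):
    """Return the hostname of a URL with any leading 'www.' removed."""
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host


def brand_citations(cited_urls, brand_domain):
    """Return (1-based position, url) for citations on the brand's domain or subdomains."""
    hits = []
    for position, url in enumerate(cited_urls, start=1):
        host = domain_of(url)
        if host == brand_domain or host.endswith("." + brand_domain):
            hits.append((position, url))
    return hits


# Hypothetical citation list parsed from one answer.
cited = [
    "https://www.example.com/research/report",
    "https://other.example.org/post",
    "https://blog.example.com/guide",
]

hits = brand_citations(cited, "example.com")
print(hits)
```

Matching on the exact domain or a dot-prefixed suffix avoids counting lookalike domains that merely end with the same characters.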

The Four Also-Rans

Four additional AI assistants are worth tracking but do not yet move pipeline for most brands:

Anthropic Claude — Claude with web search uses Brave Search via API. Brave's index is independent of both Google and Bing and has thinner coverage. Brand visibility on Claude correlates roughly with Brave Search visibility. Claude's user base is meaningful in technical and AI-research circles; less so for general consumer markets.

Microsoft Copilot — Copilot is Bing-derived and produces brand visibility patterns close to ChatGPT's web search. If you optimize for ChatGPT, Copilot follows.

Mistral Le Chat — Mistral's chat assistant uses a combination of its own training data and partner search providers. Limited by training cutoff and smaller user base.

You.com — Combines multiple search providers and has its own index. Smaller user base, primarily power users.

For most brands, optimizing for the four primary platforms (AIO, ChatGPT, Gemini, Perplexity) covers 95%+ of measurable AI search query volume in 2026. The also-rans become important as their share grows or for specific niches.

Why Your Brand Ranks Differently on Each AI Platform

When we measure brand visibility across all four platforms, three archetype patterns recur:

Archetype 1: The Google-strong, Bing-weak brand

High AIO surface rate, high Gemini surface rate, low ChatGPT surface rate, low-to-medium Perplexity surface rate. Common in Indian D2C brands, smaller US brands that ignored Bing for a decade, and any brand that built its SEO playbook exclusively around Google.

The fix is Bing-side infrastructure: BWT submission, Bingbot allowed, sitemap pushed, priority pages indexed manually through BWT. ChatGPT visibility improves within 4-8 weeks of fixing the Bing gap.

Archetype 2: The data-rich, entity-thin brand

High Perplexity surface rate, low Gemini surface rate, medium AIO and ChatGPT. Common in data-led B2B SaaS brands that publish strong original research but have weak entity-graph footprint (no Wikipedia, sparse sameAs, missing Knowledge Graph panel).

The fix is entity work: pursue Wikipedia presence where appropriate, comprehensive Organization schema with full sameAs links, knowledge panel claim, regular brand-name press coverage to strengthen entity recognition signals.

Archetype 3: The balanced brand

Roughly even surface rates across all four platforms. This is rare and usually the result of deliberate four-platform GEO investment. The brands that achieve this profile have done all the foundational work: crawler allow lists, JSON-LD on every priority page, structured comparison content, original research, Wikipedia + Knowledge Graph entity recognition, and per-platform measurement that catches drift.

This is the goal state. Most brands today are in archetype 1 or 2.

The Measurement Implication

The most important consequence of the four-platform reality: you cannot generalize across platforms. Aggregate AI surface rate is a weak metric. The useful metric is a four-row breakdown — surface rate per platform — combined with citation context per mention.
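A per-platform breakdown is a small computation once query results are logged. The sketch below assumes each dispatched query is recorded as a (platform, surfaced) pair. The data is a toy example made up for illustration, not audit output.

```python
from collections import Counter


def surface_rates(results):
    """Compute the share of queries on each platform where the brand surfaced.

    results: iterable of (platform, surfaced) pairs, one per dispatched query.
    """
    total = Counter()
    surfaced = Counter()
    for platform, hit in results:
        total[platform] += 1
        surfaced[platform] += bool(hit)
    return {platform: surfaced[platform] / total[platform] for platform in total}


# Toy data: two queries per platform.
results = [
    ("Google AI Overview", True), ("Google AI Overview", False),
    ("ChatGPT", False), ("ChatGPT", False),
    ("Gemini", True), ("Gemini", True),
    ("Perplexity", True), ("Perplexity", False),
]

rates = surface_rates(results)
aggregate = sum(hit for _, hit in results) / len(results)

for platform, rate in rates.items():
    print(f"{platform}: {rate:.0%}")
print(f"Aggregate: {aggregate:.0%}")
```

In this toy data the aggregate comes out at 50% while the platforms range from 0% to 100%, which is the kind of spread a single blended number hides.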

This is also why traditional SEO platforms do not produce useful AI search measurement. Tools like Semrush, Ahrefs, and BrightEdge were built around Google rank tracking and have no native architecture for dispatching real queries against multiple AI platforms with persona context. A BrightEdge alternative for AI search, or an AI search monitoring alternative to Semrush, is a structurally different product, not a feature add-on. The data model is different, the dispatch infrastructure is different, the metrics are different.

A proper AI search visibility tracker does five things that traditional SEO tools cannot:

- Dispatches real queries to all four AI platforms, not proxy signals

- Runs queries with multiple personas to capture variance

- Parses platform-specific response formats (AIO HTML block, ChatGPT chat output, Gemini chat output, Perplexity citation list)

- Computes per-platform surface rate and citation context

- Benchmarks against named competitors in the same category

This is the architecture Citare was built around. It is also the structural reason most teams are flying blind on AI visibility — the category of tooling required to see clearly on this surface barely existed two years ago.

How to Be Visible on All Four Platforms

A practical checklist for brands targeting balanced four-platform visibility:

Crawler access (all four)

- Allow Googlebot, Google-Extended, Bingbot, GPTBot, PerplexityBot, and ClaudeBot in robots.txt

- Verify with each platform's user-agent test

Bing infrastructure (ChatGPT)

- Submit sitemap to Bing Webmaster Tools

- Verify domain ownership

- Manually index top 20 priority pages

- Monitor Bing index coverage monthly

Structured data (all four)

- Organization schema on homepage with full sameAs array

- LocalBusiness schema if physical presence

- Product schema on product pages

- FAQPage schema on Q&A content

- Article schema on guides and research posts

- HowTo schema on procedural content

Entity graph (Gemini, AIO)

- Wikipedia presence (where editorially appropriate)

- LinkedIn company page completeness

- Crunchbase profile

- Knowledge panel claim in Google

- Consistent NAP across all directories

Content depth (all four, Perplexity especially)

- Original research and data

- Comprehensive topic coverage (pillar + cluster architecture)

- Named-comparison content

- Updated/refreshed content with dateModified

- Clear authorship with E-E-A-T signals

Measurement (operating layer)

- Per-platform surface rate tracked monthly

- Persona-anchored query dispatch

- Named competitor benchmarking

- Citation context attribution

A brand that completes this checklist will move out of archetype 1 or 2 and toward archetype 3 over a 90-180 day window.

Frequently Asked Questions

Why does ChatGPT not see my brand if I rank #1 on Google?

ChatGPT's web search grounds against Bing's index, not Google's. A brand can be #1 on Google and missing from Bing. The fix is Bing Webmaster Tools submission, ensuring Bingbot is allowed in robots.txt, and verifying Bing has crawled and indexed your priority pages.

How often do AI platforms reindex content?

Each platform has different cadences. Google AI Overview and Gemini both source from Google's index, which reindexes frequently — within hours to days for established sites. ChatGPT's web search uses Bing's index, which reindexes less frequently — days to weeks. Perplexity's own crawler sets its own pace, typically weekly to monthly for established sites. Trained-knowledge updates (the part of ChatGPT not using web search) lag by training cycle, often 6-12 months.

Is GPTBot the same as Bingbot?

No. GPTBot is OpenAI's crawler used to gather training data for future ChatGPT model versions. Bingbot is Microsoft's search crawler used to power Bing search results, including the search results that ChatGPT uses for its web search feature. You need both allowed for full ChatGPT visibility.

Does Perplexity use Google's index?

No. Perplexity runs its own crawler (PerplexityBot) and builds its own index. It is independent of both Google and Bing. This is why a brand can be invisible on Google and still appear on Perplexity if Perplexity has crawled the site and the content meets Perplexity's source-quality criteria.

What is the difference between Gemini and Google AI Overview?

Both source from Google's index, but they are distinct products. Google AI Overview is the AI-generated answer block at the top of a Google search results page. Gemini is Google's standalone AI assistant available at gemini.google.com and integrated into Workspace. Gemini surfaces named-competitor comparisons more aggressively than AIO, weights Knowledge Graph signals more heavily, and retains conversational state across turns.

Should I optimize for all four platforms or focus on one?

For most brands, all four. The structural choice is not which platform to optimize for but how to allocate effort across them. Foundational work (crawlability, structured data, content depth) helps all four simultaneously. Platform-specific work (Bing for ChatGPT, Knowledge Graph for Gemini, original research for Perplexity, FAQ schema for AIO) compounds when done in parallel.

How do I get cited by Perplexity?

Allow PerplexityBot in robots.txt, publish original research and data, maintain comprehensive content, render core content in HTML (not JavaScript-only), and build authoritative inbound backlinks. Perplexity rewards source-quality content more than any other AI platform.

Does Wikipedia presence help on Gemini?

Yes, significantly. Gemini relies more on Google's Knowledge Graph for entity recognition than the other AI platforms. A Wikipedia entry strengthens Knowledge Graph entity signals and produces measurable improvement in Gemini citation. Where editorial Wikipedia presence is not yet warranted, comprehensive Organization schema with sameAs links to canonical entity references (LinkedIn, Crunchbase, social accounts) is the closest functional equivalent.

What is ChatGPT Memory and does it affect brand visibility?

ChatGPT Memory stores user-specific context across sessions. For brand tracking this means individual user responses can be biased by their prior interactions. Aggregate measurement requires persona-anchored dispatch from clean contexts to avoid memory contamination of results.

How long does it take for AI platforms to learn about a new brand?

Web-search modes (AIO, Gemini, ChatGPT web search, Perplexity) update within days to weeks of new content publishing, depending on crawl cadence. Trained-knowledge modes (the non-web-search portion of ChatGPT, Mistral, etc.) lag by full training cycles — often 6-12 months. New brands should expect 4-8 weeks for web-search visibility and longer for trained-knowledge inclusion.

See Your Surface Rate Across All Four Platforms

You cannot improve what you cannot see. Citare measures your brand across Google AI Overview, ChatGPT, Gemini, and Perplexity — running persona-anchored query dispatches, computing per-platform surface rates, and benchmarking against named competitors in your category.

Run your free AI visibility audit → [citare.ai/audit]
