Guide 103

Why Your Brand Ranks Differently on Each AI Platform

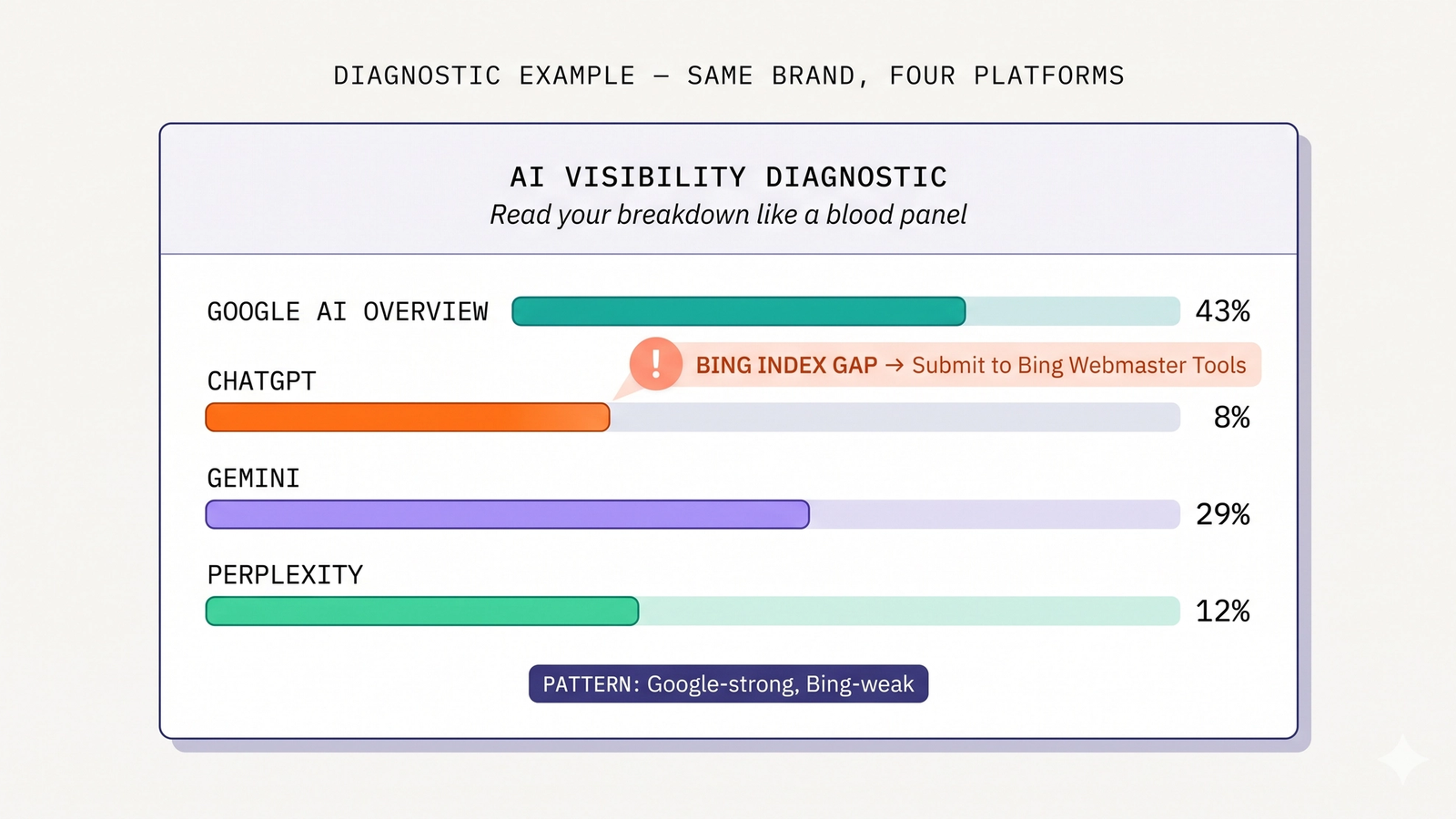

Same brand, four AI platforms, four different surface rates. Five diagnostic patterns and what each one tells you to fix.

Last updated: May 2026

When you measure your brand across all four AI platforms, the surface rates don't match. They never match.

A brand might show 43% on Google AI Overview, 8% on ChatGPT, 29% on Gemini, 12% on Perplexity. Same brand, same week, same query set. The asymmetry is not measurement noise. It's structural — and it tells you something specific about what's broken in your AI search optimization.

The asymmetry is also actionable. Once you can read the per-platform breakdown, the data points to specific interventions. A weak ChatGPT surface rate next to a strong AI Overview rate has a different fix than a weak Gemini rate next to a strong Perplexity rate.

This guide is the diagnostic. Five archetype patterns, each with the underlying cause and the specific fix. Read your platform-by-platform breakdown like a doctor reads a blood panel. (For the per-platform mechanics that produce these patterns, see How ChatGPT Decides What to Recommend, How Gemini Indexes Brands, and How Perplexity Sources Answers.)

The Asymmetry Is Structural, Not Random

Each AI platform sources from a different index, weights different signals, and serves a different audience. The mechanism is the same as why one brand might rank #1 in Spanish-language search and #15 in English — different scoring functions over different inputs produce different outputs.

The four scoring functions:

- Google AI Overview — sources Google's index; weights structured data, FAQ schema, freshness, semantic completeness; geo-aware

- ChatGPT — sources Bing's index (web search mode) plus OpenAI training data (offline mode); weights Bing rank, third-party source mentions, content depth

- Gemini — sources Google's index; weights Knowledge Graph entity strength, comparison content, conversational coherence; Workspace integration matters

- Perplexity — sources its own index; weights original research, source-quality content, recent dateModified, backlinks (more than other platforms)

Same content, four different scoring runs, four different surface rates. The variance is not a bug. It's the system working as designed.

(For the deep mechanics of each platform, see The Four AI Search Platforms Explained.)

Five Diagnostic Patterns

In our brand audits, five archetype patterns recur often enough to be diagnostic:

- Strong AIO, weak ChatGPT — the most common pattern. Bing index gap.

- Strong AIO, weak Gemini — entity graph weakness.

- Strong Perplexity, weak other three — research-led brand without foundation health.

- Weak across all four — foundation issues (robots.txt, JS rendering, image-locked content).

- Strong on three, weak on one — specific platform-side issue.

Each archetype has a specific cause and a specific fix. The next sections walk through each.

Diagnostic 1: Strong AIO, Weak ChatGPT

Pattern: AI Overview surface rate is solid (15-30%+); ChatGPT surface rate is conspicuously low (under 10%); Gemini and Perplexity sit somewhere in between.

Cause: Bing index gap. ChatGPT's web search grounds against Bing, not Google. Your Google index health is fine, which is why AI Overview surfaces you. Bing's coverage of your domain is weak, which is why ChatGPT misses you.

This is the most common pattern in our audits, particularly for Indian brands and comparable US SMBs whose SEO programs ignored Bing for a decade. A habit of optimizing exclusively for Google has left many brands with strong Google footprints and thin Bing presence.

Diagnostic signals:

- AIO surface rate >15%

- ChatGPT surface rate <10%

- The gap between AIO and ChatGPT is more than 2x

- Bing Webmaster Tools shows missing or low-coverage indexing of priority pages

Fix:

- Submit your sitemap to Bing Webmaster Tools immediately

- Verify Bingbot is allowed in robots.txt (see AI Crawler Access Guide)

- Manually request indexing of top 20 priority pages through Bing Webmaster Tools

- Audit Bing index coverage monthly

Time to effect: 4-8 weeks. Bing's reindexing cycle is the gating factor. Brands typically see ChatGPT surface rate move from sub-10% to 15-25% within 8 weeks of the fix.
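The Bingbot check in the fix list can be scripted. What follows is a minimal sketch using Python's standard `urllib.robotparser`; the robots.txt content, the URLs, and the `crawler_allowed` helper are hypothetical and only illustrate the idea. Python's parser applies the first matching rule in file order, which may differ from how a given crawler resolves conflicting rules, so treat the result as a first pass rather than a verdict.

```python
from urllib.robotparser import RobotFileParser


def crawler_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text lets user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Hypothetical robots.txt that blocks Bingbot from /guides/ only.
SAMPLE_ROBOTS = """\
User-agent: bingbot
Disallow: /guides/

User-agent: *
Disallow:
"""

blocked = crawler_allowed(
    SAMPLE_ROBOTS, "bingbot", "https://example.com/guides/ai-search"
)
allowed = crawler_allowed(SAMPLE_ROBOTS, "bingbot", "https://example.com/pricing")
```

Run it against your real robots.txt and your top 20 priority URLs. Any page that comes back blocked for Bingbot is a candidate explanation for the ChatGPT gap.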

Diagnostic 2: Strong AIO, Weak Gemini

Pattern: AI Overview surface rate is solid; Gemini surface rate is materially lower (sometimes 30-50% of AIO rate); ChatGPT and Perplexity vary.

Cause: Entity graph weakness. AIO and Gemini both source from Google's index, but Gemini relies more heavily on Google's Knowledge Graph for entity recognition. A brand with weak Knowledge Graph signals — no Wikipedia entry, sparse sameAs array, no claimed knowledge panel — gets cited less reliably on Gemini than on AIO even though the underlying index is the same.

This pattern appears for brands that have invested in SEO and content but neglected entity-graph signals. Knowledge Graph deployment requires different work than schema or content.

Diagnostic signals:

- AIO surface rate >20%

- Gemini surface rate 50% or less of AIO rate

- No Wikipedia entry (or stub-only entry)

- Organization JSON-LD sameAs array has fewer than 4-5 entries

- No claimed Google knowledge panel

- Inconsistent NAP data across directories

Fix:

- Audit the Organization JSON-LD sameAs array: aim for 5-10 canonical references

- Verify all sameAs URLs are canonical (not redirects, not stale)

- Build presence on Crunchbase, LinkedIn Company Page, Wikidata if eligible

- Pursue Wikipedia eligibility long-term (notable + sourced + verifiable)

- Claim Google knowledge panel if Google has built one

Time to effect: 8-16 weeks. Knowledge Graph entity strength compounds slowly. (See How Gemini Indexes Brands for the entity-graph playbook.)
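The sameAs audit is easy to automate in part. The sketch below assumes you have already copied the Organization JSON-LD out of your page as a string; the `audit_same_as` helper, the sample organization, and its URLs are hypothetical. The default minimum of 5 comes from the 5-10 target above. It counts entries and flags non-HTTPS and duplicate URLs, but it does not follow redirects, so canonical status still needs a manual or networked check.

```python
import json


def audit_same_as(jsonld_text: str, minimum: int = 5) -> dict:
    """Summarize the sameAs array of an Organization JSON-LD document."""
    data = json.loads(jsonld_text)
    same_as = data.get("sameAs", [])
    if isinstance(same_as, str):  # schema.org also permits a single URL string
        same_as = [same_as]
    return {
        "count": len(same_as),
        "meets_minimum": len(same_as) >= minimum,
        "non_https": [url for url in same_as if not url.startswith("https://")],
        "duplicates": sorted({url for url in same_as if same_as.count(url) > 1}),
    }


# Hypothetical Organization markup with a sparse sameAs array.
SAMPLE_JSONLD = """
{
  "@context": "https://schema.org",
  "@type": "Organization",
  "name": "Example Brand",
  "url": "https://example.com",
  "sameAs": [
    "https://www.linkedin.com/company/example-brand",
    "https://www.crunchbase.com/organization/example-brand",
    "http://twitter.com/examplebrand"
  ]
}
"""

report = audit_same_as(SAMPLE_JSONLD)
```

Here the report shows three entries, below the minimum, with one non-HTTPS URL to clean up.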

Diagnostic 3: Strong Perplexity, Weak Other Three

Pattern: Perplexity surface rate is high (25-40%+); AIO, ChatGPT, and Gemini are all lower. Often the brand has no Wikipedia entry, no claimed knowledge panel, and below-average Bing presence.

Cause: Research-led brand without foundation health. The brand publishes high-quality original content (Perplexity's preferred input) but hasn't invested in foundational AI search infrastructure (Google-Extended allowance, Bing Webmaster Tools, comprehensive structured data, knowledge panel, sameAs deployment). Perplexity sees the content and cites it because Perplexity rewards source-quality material. The other three platforms can't see the content as easily because foundation work is incomplete.

This pattern is more common in B2B SaaS, research firms, and content-heavy brands than in D2C or local services.

Diagnostic signals:

- Perplexity surface rate >25%

- AIO surface rate <15%

- ChatGPT surface rate <10%

- Gemini surface rate <15%

- Brand has substantial original content (research, data, comprehensive guides)

- robots.txt is mostly clean for PerplexityBot but may have Google-Extended blocked

- Bing Webmaster Tools submission missing or stale

Fix:

- Verify Google-Extended allowed in robots.txt

- Submit to Bing Webmaster Tools

- Deploy comprehensive Organization JSON-LD with full sameAs

- Add FAQPage schema to top priority pages

- Pursue knowledge panel claim

The good news: this pattern is the easiest to fix because the content foundation is already strong. The fixes are infrastructure work that produces measurable lift on AIO/ChatGPT/Gemini within 4-12 weeks.
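For the FAQPage step, the markup can be generated from question-and-answer pairs you already have on the page. This is a minimal sketch: `FAQPage`, `Question`, `Answer`, `mainEntity`, and `acceptedAnswer` are standard schema.org names, while the `build_faq_jsonld` helper and the sample questions are hypothetical.

```python
import json


def build_faq_jsonld(qa_pairs: list[tuple[str, str]]) -> dict:
    """Build a schema.org FAQPage document from (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }


# Hypothetical questions; use the ones that are visibly answered on the page.
faq = build_faq_jsonld(
    [
        ("What does Example Brand do?", "Example Brand sells analytics software."),
        ("Is there a free trial?", "Yes, a 14-day trial is available."),
    ]
)
faq_json = json.dumps(faq, indent=2)
```

Embed the resulting JSON in a `<script type="application/ld+json">` tag on the same page that shows the questions and answers to visitors.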

Diagnostic 4: Weak Across All Four

Pattern: Surface rates of 5% or less on every platform. The brand might have decent Google rank, but no AI surface presence to match.

Cause: Foundational issues. Common combinations:

- Google-Extended blocked in robots.txt (kills AIO + Gemini)

- PerplexityBot blocked (kills Perplexity)

- Bing Webmaster Tools never submitted (kills ChatGPT)

- JS-only rendering of critical content (kills all four)

- Image-locked brand differentiators (kills extraction across all four)

- Thin or shallow content (kills citation eligibility)

- No structured data deployment

This is the "AI search optimization not started" archetype. Most brands in 2026 are still here.

Diagnostic signals:

- All four platforms below 10% surface rate

- robots.txt audit reveals one or more AI crawler blocks

- Bing Webmaster Tools shows zero or near-zero indexed pages

- Limited or zero JSON-LD on priority pages

- Critical content rendered client-side via JavaScript

Fix: the foundation playbook from How to Appear in Google AI Overview, executed across the priorities for all four platforms. The 9-action priority list applies here.

Time to effect: 8-16 weeks for substantial change. Brands typically move from <5% to 15-25% across all four platforms within 16 weeks of executing the foundation playbook.
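The client-side rendering signal can be tested without a browser. The sketch below makes a simplifying assumption: a crawler that does not execute JavaScript sees only the text in the raw HTML response. The `missing_from_raw_html` helper, the sample page, and the phrases are hypothetical. It uses Python's standard `html.parser` to pull out visible text, skipping script and style content, then reports which key phrases are absent.

```python
from html.parser import HTMLParser


class _TextExtractor(HTMLParser):
    """Collect text nodes, ignoring anything inside <script> or <style>."""

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.chunks.append(data)


def missing_from_raw_html(raw_html: str, phrases: list[str]) -> list[str]:
    """Return the phrases that do not appear in the page's unrendered text."""
    extractor = _TextExtractor()
    extractor.feed(raw_html)
    extractor.close()
    text = " ".join(" ".join(extractor.chunks).split()).lower()
    return [phrase for phrase in phrases if phrase.lower() not in text]


# Hypothetical page: the headline is in the HTML, the offer is injected by JS.
SAMPLE_HTML = """
<html><body>
  <h1>Acme Analytics</h1>
  <div id="app"></div>
  <script>render("Free 30-day trial")</script>
</body></html>
"""

missing = missing_from_raw_html(SAMPLE_HTML, ["Acme Analytics", "Free 30-day trial"])
```

Feed it the HTML you get from a plain HTTP fetch of each priority page, plus the differentiators you most want cited. Phrases that live only in images will show up as missing too, which covers the image-locked case.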

Diagnostic 5: Strong on Three, Weak on One

Pattern: Three platforms show solid surface rates; one platform conspicuously lags.

Cause: Specific platform-side issue. Each platform's lag points to a specific fix:

- Weak ChatGPT only — Bing index gap (see Diagnostic 1)

- Weak AIO only — Google-Extended blocked or AIO-specific selection issue (FAQ schema missing, dateModified stale)

- Weak Gemini only — entity graph weakness (see Diagnostic 2)

- Weak Perplexity only — PerplexityBot blocked, JS-only critical content, or thin source-quality material

This pattern is uncommon but high-leverage when it appears. The fix is targeted rather than systemic.

Diagnostic signals:

- Three platforms show comparable surface rates (within 50% of each other)

- One platform sits at half or less of the others' average

- The brand has done foundation work generally but missed one specific input

Fix: target the underperforming platform's specific lever. (See the relevant platform deep-dive for the playbook.)

How to Use the Diagnostic

The right operating cadence:

- Measure. Get a per-platform breakdown of surface rate, persona-anchored, with at least 50 queries per persona. (See How to Measure AI Search Visibility for the framework.)

- Identify the archetype. Match your numbers against the five patterns above. The clearest fit is usually obvious.

- Prioritize the fix. Each archetype has a specific intervention with known time-to-effect. Schedule the fix.

- Re-measure in 30-60 days. Check whether the fix moved the platform you targeted. If yes, move to the next-largest gap. If no, the diagnosis was wrong — revisit.

- Repeat. Most brands cycle through 2-3 archetype patterns over 6-12 months as they close the gaps.

The diagnostic is iterative. You won't fix all patterns at once. You'll close one, reveal the next, close that. Surface rate compounds toward the "balanced" archetype over 12-18 months of disciplined work.
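The "identify the archetype" step can be roughed out in code. This sketch encodes the diagnostic signals from the five sections above as thresholds on surface rate in percent. Several choices are assumptions rather than part of the guide: the order in which the patterns are checked, reading "within 50% of each other" as the lowest of three rates being at least half the highest, and the `classify_archetype` name itself. It ignores the non-numeric signals (Bing coverage, Wikipedia, robots.txt), so use it to shortlist a pattern, not to confirm one.

```python
from statistics import mean


def classify_archetype(rates: dict[str, float]) -> str:
    """Match per-platform surface rates (in percent) to one of five patterns.

    Expects the keys "aio", "chatgpt", "gemini" and "perplexity".
    """
    aio, chatgpt = rates["aio"], rates["chatgpt"]
    gemini, perplexity = rates["gemini"], rates["perplexity"]

    # Diagnostic 4: every platform below 10%.
    if all(rate < 10 for rate in rates.values()):
        return "4: weak across all four"

    # Diagnostic 3: Perplexity strong, the other three weak.
    if perplexity > 25 and aio < 15 and chatgpt < 10 and gemini < 15:
        return "3: strong Perplexity, weak other three"

    # Diagnostic 5: three comparable platforms, one at half their average or less.
    for name, rate in rates.items():
        others = [r for n, r in rates.items() if n != name]
        comparable = min(others) >= 0.5 * max(others)
        if comparable and rate <= 0.5 * mean(others):
            return f"5: strong on three, weak on {name}"

    # Diagnostic 1: AIO solid, ChatGPT low, gap of more than 2x.
    if aio > 15 and chatgpt < 10 and aio > 2 * chatgpt:
        return "1: strong AIO, weak ChatGPT"

    # Diagnostic 2: AIO solid, Gemini at half of AIO or less.
    if aio > 20 and gemini <= 0.5 * aio:
        return "2: strong AIO, weak Gemini"

    return "no clear archetype"


# The example brand from the introduction of this guide.
example = classify_archetype(
    {"aio": 43, "chatgpt": 8, "gemini": 29, "perplexity": 12}
)
```

The introduction's example brand (43% AIO, 8% ChatGPT, 29% Gemini, 12% Perplexity) lands in archetype 1, which points to the Bing index fix first.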

Frequently Asked Questions

How do I get the per-platform breakdown to start with?

Run a structured query test. Pick 50-100 representative queries from your category. Dispatch them through Google AI Overview, ChatGPT, Gemini, and Perplexity with persona context. Parse responses for brand mentions. Compute surface rate per platform. (See How to Measure AI Search Visibility for the full methodology.) Tools like Citare automate this end-to-end.
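Once the responses are collected, the parsing and counting step is short. The sketch below assumes surface rate means the share of responses that mention the brand at least once, expressed in percent; the guide's full methodology may define it more precisely. The function names, the brand "Acme Analytics", and the sample responses are hypothetical.

```python
import re


def surface_rate(responses: list[str], brand_aliases: list[str]) -> float:
    """Percent of responses that mention any alias as a whole word or phrase."""
    if not brand_aliases:
        raise ValueError("provide at least one brand alias")
    if not responses:
        return 0.0
    pattern = re.compile(
        "|".join(rf"(?<!\w){re.escape(alias)}(?!\w)" for alias in brand_aliases),
        re.IGNORECASE,
    )
    hits = sum(1 for text in responses if pattern.search(text))
    return 100.0 * hits / len(responses)


def per_platform_rates(
    responses_by_platform: dict[str, list[str]], brand_aliases: list[str]
) -> dict[str, float]:
    """Compute the surface rate separately for each platform."""
    return {
        platform: surface_rate(responses, brand_aliases)
        for platform, responses in responses_by_platform.items()
    }


# Hypothetical responses, one per query, for two of the four platforms.
sample = {
    "chatgpt": [
        "Top picks: Foo and Bar.",
        "Consider Acme Analytics for dashboards.",
        "Foo is popular with small teams.",
        "Bar and Baz lead this category.",
    ],
    "perplexity": [
        "Acme Analytics published a benchmark on this.",
        "Reviewers say acme analytics ranks well.",
        "Foo is one option.",
        "Try Bar.",
    ],
}

rates = per_platform_rates(sample, ["Acme Analytics"])
```

With 50-100 queries per platform instead of four, the same function gives you the per-platform breakdown the diagnostic needs. List every common spelling of your brand as an alias, or the rate will undercount.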

Should I optimize for the platform I'm weakest on or strongest on?

Both, but in different ways. The weakest platform usually has a specific structural cause (Bing gap, entity graph, etc.) — fixing that is high-leverage. The strongest platform has working momentum — extending it (more comparison content, more original research) compounds the advantage. Don't fix only one or only the other.

Why do my surface rates change month-to-month?

Three normal sources of variation. (1) AI platforms reindex and reevaluate over crawl and training cycles — surface rate can shift up or down 3-5 percentage points naturally. (2) Competitor moves — a competitor's GEO investment can take share from you on shared queries. (3) Query set drift — if your query set shifts seasonally or as the category evolves, surface rate moves with it. Persistent month-over-month decline of 10+ percentage points is the actionable signal; small variation is noise.
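The noise-versus-signal rule above can be written down as a small check. In this sketch the 5-point noise band and the 10-point decline threshold come from the answer above; the "notable change" label for moves in between, and the `classify_change` name, are assumptions. It compares two measurements only, so it does not capture persistence: confirm a decline over more than one month before acting on it.

```python
def classify_change(
    previous: float,
    current: float,
    noise_band: float = 5.0,
    decline_alert: float = 10.0,
) -> str:
    """Label a month-over-month move in surface rate (percentage points)."""
    delta = current - previous
    if delta <= -decline_alert:
        return "actionable decline"
    if abs(delta) <= noise_band:
        return "noise"
    return "notable change"


small_dip = classify_change(29.0, 26.0)   # down 3 points
big_drop = classify_change(29.0, 18.0)    # down 11 points
mid_drop = classify_change(29.0, 22.0)    # down 7 points
```

Run it per platform rather than on a blended average, since a drop on one platform can hide behind gains on the others.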

How do I know if a fix is working?

Re-run the same audit 30-60 days after the fix. The platform you targeted should show measurable lift. If it does, the diagnosis was correct. If it doesn't at 30 days, wait for the full 60-day window before concluding the fix failed, since reindex cycles vary. Persistent no-movement at 60 days suggests a different underlying cause.

Can a brand realistically be strong on all four platforms?

Yes — the "balanced" archetype is achievable, but it requires deliberate four-platform work. In our audits, brands achieving 25%+ surface rate on all four platforms have all done: comprehensive crawler access, comprehensive structured data, multi-platform comparison content, original research, knowledge graph entity work, and continuous measurement. It's not one trick; it's a sustained program. Most brands today are in archetypes 1-3.

Which archetype is fastest to fix?

Archetype 1 (Strong AIO, Weak ChatGPT) is usually the fastest. Bing Webmaster Tools submission takes 5 minutes. Bingbot allowance is one line in robots.txt. Reindexing takes 4-8 weeks, but the work itself is trivial. Most brands move ChatGPT surface rate from sub-10% to 15-25% within two months of fixing this single issue.

Which archetype takes the longest to fix?

Archetype 2 (Strong AIO, Weak Gemini) is typically the slowest because Knowledge Graph entity strength compounds over months. Wikipedia presence (where eligible) takes time. sameAs deployment helps, but the full effect comes from third-party signal accumulation. Plan for 8-16 weeks of work and 12-24 weeks for full compounding.

Diagnose Your AI Visibility Patterns

Citare runs persona-anchored audits across all four AI platforms, computes per-platform surface rate, identifies your archetype pattern, and recommends the highest-leverage fix. The diagnostic is the starting point for a measurable optimization program.

Run your free AI visibility audit → [citare.ai/audit]
